Design and Implementation of Ultra-Low-Latency Video Encoder Using High-Level Synthesis

PubDate: February 2020

Teams: Nihon University

Writers: Kosuke Fukava; Kaito Mori; Kousuke Imamura; Yoshio Matsuda; Tetsuya Matsumura; Seiji Mochizuki

Abstract

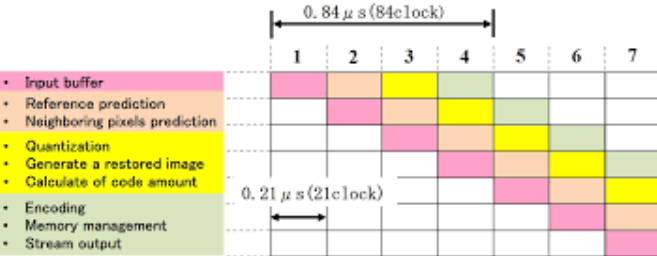

For real-time applications such as autonomous driving and virtual reality (VR), we previously proposed an ultra-low-latency video coding method that adopts line-based processing for Full-HD video. In this paper, we propose a design and implementation of an ultra-low-latency video encoder based on that method. To reduce the amount of hardware, we optimize the image-prediction specification of our previous work. Applying a high-level synthesis (HLS) design methodology targeting a Xilinx FPGA, we obtain an implementation that uses 10,677 LUTs, 3,714 FFs, and 66 DSPs. The implemented video encoder achieves a latency of less than 1.0 μs and compression to 39.4% without significant visual degradation. As a result, a cost-effective ultra-low-latency video encoder is implemented on a low-cost FPGA.