Haptic Force Guided Sound Synthesis in Multisensory Virtual Reality (VR) Simulation for Rigid-Fluid Interaction

PubDate: August 2019

Affiliation: Tianjin University

Authors: Haonan Cheng; Shiguang Liu

Abstract

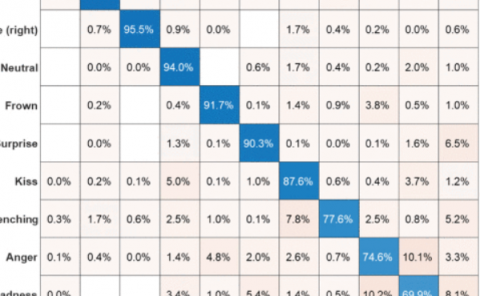

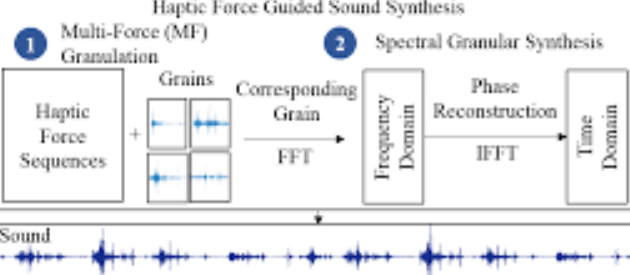

This paper tackles a challenging problem in interactive sound synthesis for rigid-fluid interaction. A core issue for rigid-fluid interaction in a multisensory VR system is how to balance algorithmic efficiency, the authenticity of the results, and synchronization across modalities. Because the audio sampling rate is far higher than the update rates of the visual and haptic modalities, sound synthesis for a multisensory VR system is harder than visual simulation or haptic rendering, and it remains an open challenge. This paper therefore focuses on developing an efficient sound synthesis method tailored for multisensory systems. To improve authenticity while ensuring real-time performance and synchronization, we propose a novel haptic force guided granular sound synthesis method for generating sound in multisensory VR systems. To the best of our knowledge, this is the first work to exploit haptic force feedback from the tactile channel to guide sound synthesis in a multisensory VR system. Specifically, we propose a modified spectral granular sound synthesis method that ensures real-time simulation while also improving the authenticity of the results. Then, to balance algorithmic efficiency and synchronization, we design a multi-force (MF) granulation algorithm that avoids repeated analysis of fluid particle motion and thereby improves synchronization. A range of results show that the proposed method effectively overcomes the limitations of existing methods in the audio modality and has great potential to provide strong technical support for building more immersive multisensory VR systems.
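The abstract does not detail the modified spectral granular method or the MF granulation algorithm. The Python sketch below is only a rough illustration of the general idea of letting a haptic force signal drive granular synthesis, and it is not the authors' method. It performs plain time-domain overlap-add granular synthesis: one Hann-windowed grain is triggered per haptic update and scaled by the force magnitude. It also shows the rate mismatch mentioned above, since roughly 44 audio samples must be produced per haptic update. Several details are hypothetical assumptions: the 44.1 kHz audio rate, the 1 kHz haptic rate, the linear force-to-gain mapping, the random grain choice, and the names `force_guided_granular` and `grain_bank`.

```python
import math
import random

AUDIO_RATE = 44_100   # assumed audio sample rate (Hz)
HAPTIC_RATE = 1_000   # assumed haptic update rate (Hz)
# Audio samples per haptic update: 44 (floor of 44.1 under the assumed rates).
SAMPLES_PER_HAPTIC_FRAME = AUDIO_RATE // HAPTIC_RATE


def hann(n):
    """Symmetric Hann window of length n, used to taper each grain."""
    if n < 2:
        return [1.0] * n
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def force_guided_granular(forces, grain_bank, grain_len=256, force_to_gain=0.1, seed=0):
    """Overlap-add one grain per haptic frame, scaled by that frame's force.

    forces: hypothetical force magnitudes, one per haptic frame.
    grain_bank: hypothetical list of grains (lists of floats, each >= grain_len long).
    force_to_gain: hypothetical linear mapping from force magnitude to grain gain.
    """
    rng = random.Random(seed)            # fixed seed for reproducible grain choices
    window = hann(grain_len)
    hop = SAMPLES_PER_HAPTIC_FRAME       # grain onsets follow the haptic clock
    out = [0.0] * (len(forces) * hop + grain_len)
    for frame, f in enumerate(forces):
        gain = force_to_gain * f
        if gain <= 0.0:
            continue                     # no contact force: trigger no grain
        grain = rng.choice(grain_bank)   # pick a grain from the bank at random
        start = frame * hop
        for i in range(grain_len):
            out[start + i] += gain * window[i] * grain[i]
    return out


if __name__ == "__main__":
    # Hypothetical grain bank: short decaying sinusoids standing in for fluid grains.
    bank = [[math.exp(-i / 60) * math.sin(2 * math.pi * f0 * i / AUDIO_RATE)
             for i in range(256)] for f0 in (400.0, 800.0, 1200.0)]
    forces = [0.0] * 10 + [2.0] * 20 + [0.5] * 20  # 50 haptic frames (50 ms)
    audio = force_guided_granular(forces, bank)
    print(len(audio))  # 50 * 44 + 256 = 2456
```

Grain onsets are tied to the haptic clock, so the audio output stays aligned with the force updates by construction. Each haptic sample still yields many audio samples through the overlapping windowed grains.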