Improving Remote Environment Visualization through 360 6DoF Multi-sensor Fusion for VR Telerobotics

PubDate: March 2021

Teams: Brown University

Writers: Austin Sumigray;Eliot Laidlaw;James Tompkin;Stefanie Tellex

PDF: Improving Remote Environment Visualization through 360 6DoF Multi-sensor Fusion for VR Telerobotics

Abstract

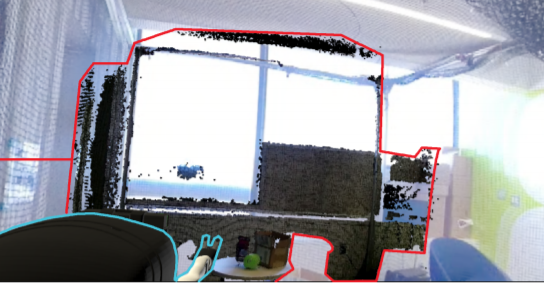

Teleoperation requires both a robust set of controls and the right balance of sensory data to allow task completion without overwhelming the user. Previous work has mostly focused on using depth cameras that fail to provide situational awareness. We have developed a teleoperation system that integrates 360° camera data in addition to the more standard depth data. We infer depth from the 360° camera data and use it to render that data in VR, which allows six-degree-of-freedom (6DoF) viewing. We use a virtual gantry control mechanism and provide a menu from which the user can choose the rendering schemes used to display the robot’s environment. We hypothesize that this approach will increase the speed and accuracy with which the user can teleoperate the robot.
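
To illustrate why per-pixel depth makes 6DoF viewing of 360° imagery possible, the following is a minimal sketch, not the authors' implementation. It assumes the 360° image is stored in an equirectangular layout (the abstract does not say which projection is used) and that a depth value in meters is available for each pixel. The function names `equirect_pixel_to_point` and `depth_map_to_points` and the axis convention are hypothetical choices made for this example. Each pixel is turned into a viewing direction on the unit sphere and scaled by its depth, giving a 3D point that a VR renderer could display from any head position.

```python
import math


def equirect_pixel_to_point(u, v, width, height, depth):
    """Back-project one equirectangular pixel to a 3D point.

    Assumed convention (hypothetical, not from the paper):
    +z is forward, +x is right, +y is up, and the image center
    looks along +z. `depth` is the distance from the camera center.
    """
    # Longitude spans [-pi, pi) across the image width.
    lon = (u + 0.5) / width * 2.0 * math.pi - math.pi
    # Latitude spans [pi/2, -pi/2] from the top row to the bottom row.
    lat = math.pi / 2.0 - (v + 0.5) / height * math.pi

    # Unit viewing direction for this pixel.
    dx = math.cos(lat) * math.sin(lon)
    dy = math.sin(lat)
    dz = math.cos(lat) * math.cos(lon)

    return (depth * dx, depth * dy, depth * dz)


def depth_map_to_points(depth_rows):
    """Convert a 2D list of depths (rows of equal length) to 3D points."""
    height = len(depth_rows)
    width = len(depth_rows[0])
    points = []
    for v, row in enumerate(depth_rows):
        for u, depth in enumerate(row):
            points.append(equirect_pixel_to_point(u, v, width, height, depth))
    return points
```

Because the points carry real 3D positions, translating the virtual camera produces parallax, which a depth-less 360° panorama cannot provide. A real system would also need to mesh or splat these points and handle depth discontinuities, which this sketch omits.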