Adaptive 360-degree video streaming using layered video coding

PubDate: April 2017

Affiliation: The University of Texas at Dallas

Authors: Afshin Taghavi Nasrabadi; Anahita Mahzari; Joseph D. Beshay; Ravi Prakash

Abstract

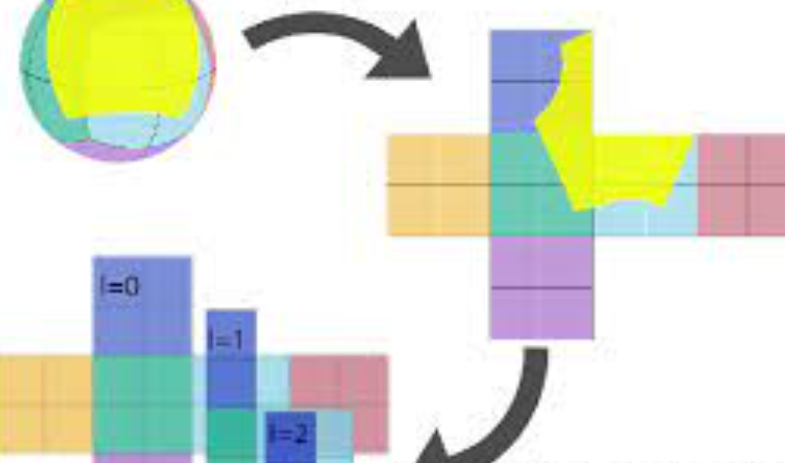

Virtual reality and 360-degree video streaming are growing rapidly; however, streaming 360-degree video is very challenging because of its high bandwidth requirements. To address this problem, video quality is adjusted according to a prediction of the user's viewport: high-quality video is streamed only for the predicted viewport, which reduces overall bandwidth consumption. Existing solutions use shallow buffers, because the buffer depth is limited by how far ahead the viewport can be predicted accurately. Playback is therefore prone to video freezes, which severely degrade the Quality of Experience (QoE). We propose using layered encoding for 360-degree video to improve QoE by reducing both the probability of video freezes and the latency of responding to the user's head movements. Moreover, this scheme significantly reduces storage requirements and improves in-network cache performance.
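To make the idea concrete, here is a minimal sketch of how a client might order its downloads under layered encoding. It is not the authors' algorithm. It assumes the 360-degree frame is split into tiles, that each tile of each segment has one base layer plus enhancement layers, and that the client keeps a deep base-layer buffer for all tiles while fetching enhancement layers only for the predicted viewport over a short horizon. The names `Chunk`, `next_requests`, `base_target`, and `el_horizon` are hypothetical.

```python
from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """One downloadable unit: one layer of one tile in one video segment."""
    segment: int  # segment index in playback order
    tile: int     # tile index within the 360-degree frame
    layer: int    # 0 = base layer, 1.. = enhancement layers


def next_requests(
    playhead: int,
    predicted_viewport: Iterable[int],
    downloaded: set[Chunk],
    num_tiles: int,
    num_layers: int,
    base_target: int,
    el_horizon: int,
) -> list[Chunk]:
    """Return the missing chunks in the order they should be requested.

    Hypothetical policy (an illustration, not the paper's scheduler):
    1. Keep a deep buffer of base-layer chunks for *all* tiles over segments
       [playhead, playhead + base_target). This does not depend on viewport
       prediction, so it can be fetched far ahead of playback.
    2. Only for the next el_horizon segments, where the viewport prediction
       is assumed to be reliable, add enhancement layers for the predicted
       viewport tiles, lowest layer first (each layer depends on the ones below).
    """
    requests: list[Chunk] = []

    # Step 1: base layer for every tile, earliest segment first.
    for seg in range(playhead, playhead + base_target):
        for tile in range(num_tiles):
            chunk = Chunk(seg, tile, 0)
            if chunk not in downloaded:
                requests.append(chunk)

    # Step 2: enhancement layers for the predicted viewport only. The horizon
    # is capped at base_target so every enhancement layer has its base layer
    # either already downloaded or scheduled earlier in this list.
    viewport = sorted(set(predicted_viewport))
    for seg in range(playhead, playhead + min(el_horizon, base_target)):
        for tile in viewport:
            for layer in range(1, num_layers):
                chunk = Chunk(seg, tile, layer)
                if chunk not in downloaded:
                    requests.append(chunk)
    return requests


if __name__ == "__main__":
    # Two tiles, one enhancement layer, viewport predicted to be tile 1.
    plan = next_requests(0, {1}, set(), num_tiles=2, num_layers=2,
                         base_target=3, el_horizon=1)
    print(plan)

    # The user turns toward tile 0 after the plan has been downloaded:
    # only the enhancement layer for tile 0 is still missing.
    print(next_requests(0, {0}, set(plan), num_tiles=2, num_layers=2,
                        base_target=3, el_horizon=1))
```

In this sketch, base-layer chunks for every tile are already buffered, so a head movement that invalidates the prediction costs only the missing enhancement-layer chunks rather than a re-download of the segment. In the worst case the user briefly sees base-layer quality instead of a freeze, which is the effect the abstract attributes to layered encoding.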