Scene-aware Sound Rendering in Virtual and Real Worlds

PubDate: May 2020

Teams: University of Maryland

Writers: Zhenyu Tang; Dinesh Manocha

PDF: Scene-aware Sound Rendering in Virtual and Real Worlds

Abstract

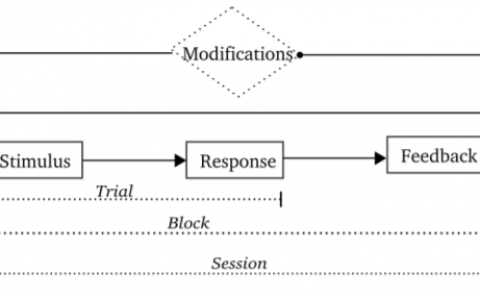

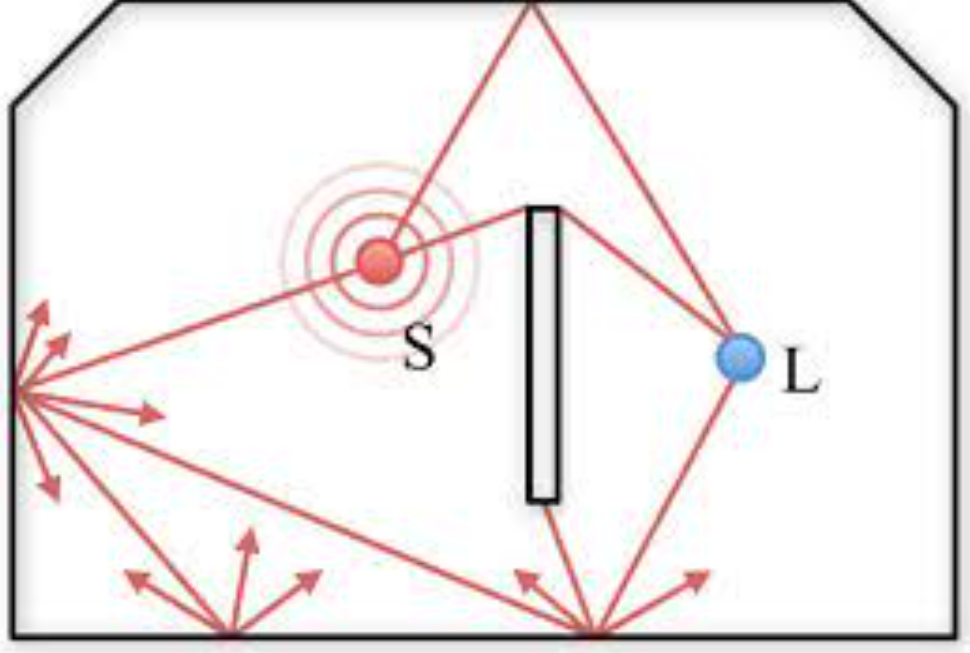

Modern computer graphics applications, including virtual reality (VR) and augmented reality (AR), have adopted techniques for both visual rendering and audio rendering. While visual rendering can already composite virtual objects seamlessly into the real world, state-of-the-art audio rendering still struggles to blend virtual sound correctly with real-world sound. When virtual sound is generated without awareness of the surrounding scene, the application becomes less immersive, especially in AR. In this position paper, we present our current work on generating scene-aware sound using ray-tracing-based simulation combined with deep learning and optimization.
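The abstract does not spell out the pipeline. The sketch below is a rough illustration of what "scene-aware" can mean at the signal level, and it is not the authors' method. It makes a dry virtual sound carry a room's reverberation by convolving it with a synthetic room impulse response (RIR). The RIR is crudely modeled as Gaussian noise under an exponential envelope whose decay is set by an assumed reverberation time RT60, meaning the energy drops by 60 dB over RT60 seconds. A ray-tracing simulation would instead produce a full RIR from the scene geometry and materials. The function names `amplitude_envelope`, `synthetic_rir`, and `convolve` are hypothetical.

```python
import math
import random


def amplitude_envelope(t, rt60):
    """Amplitude envelope of an exponentially decaying RIR.

    Energy falls by 60 dB over rt60 seconds, so amplitude falls by a
    factor of 10**-3, giving exp(-t * 3 * ln(10) / rt60).
    """
    return math.exp(-t * 3.0 * math.log(10.0) / rt60)


def synthetic_rir(rt60, sample_rate, seed=0):
    """Hypothetical stand-in for a simulated RIR: decaying Gaussian noise.

    Assumption: a real system would obtain the RIR (or at least RT60)
    from the scene, e.g. via ray-tracing simulation; here it is a parameter.
    """
    rng = random.Random(seed)
    n = int(rt60 * sample_rate)
    return [rng.gauss(0.0, 1.0) * amplitude_envelope(i / sample_rate, rt60)
            for i in range(n)]


def convolve(dry, rir):
    """Direct-form convolution: out[k] = sum_j dry[j] * rir[k - j]."""
    out = [0.0] * (len(dry) + len(rir) - 1)
    for j, x in enumerate(dry):
        if x == 0.0:
            continue  # skip silent input samples
        for i, h in enumerate(rir):
            out[j + i] += x * h
    return out
```

For example, `convolve(dry_samples, synthetic_rir(0.5, 8000))` renders a dry clip as if it were played in a room with a 0.5 s reverberation time. Direct convolution is used here for clarity. Practical renderers would typically use FFT-based or partitioned convolution instead.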