Saccadic Eye Movement Classification Using ExG Sensors Embedded into a Virtual Reality Headset

PubDate: December 2020

Teams: University of Québec

Writers: Marc-Antoine Moinnereau; Alcyr Oliveira; Tiago H. Falk

PDF: Saccadic Eye Movement Classification Using ExG Sensors Embedded into a Virtual Reality Headset

Abstract

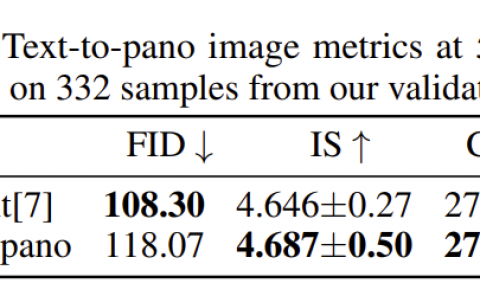

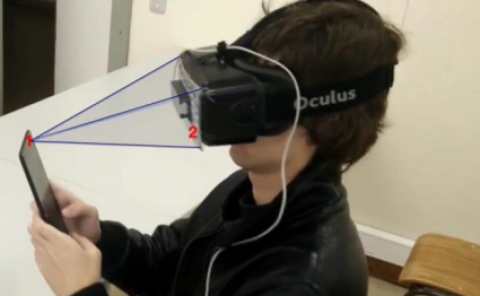

Measuring saccadic eye movements while wearing a virtual reality (VR) head-mounted display (HMD) has recently gained considerable attention, as it allows for enriched user experiences. This has led to an increase in devices showcasing camera-based eye tracking capabilities. Such devices, however, can be orders of magnitude more expensive than conventional off-the-shelf HMDs. In this study, we explore the use of low-cost sensors embedded directly into the faceplate of the HMD to measure electroencephalography (EEG) and electrooculography (EOG) signals. In a “do-it-yourself” manner, we rely on the OpenBCI biosignal amplifier for data acquisition. A 7-channel system was tested on four participants, who visually followed a target in their field of view that moved in 10-degree steps along a circumference. Time series and handcrafted features were extracted from the measured ExG signals and served as input to two different classifiers: a support vector machine (SVM) and a multilayer perceptron (MLP). A hierarchical classification approach was proposed and found to achieve the best results with the fusion of both feature sets, resulting in an average accuracy of 76.51% with an SVM. The results are encouraging and suggest that accurate, low-cost classification of saccadic eye movements may be possible.
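The abstract does not spell out how the hierarchy or the feature fusion is built, so the following is only a minimal sketch of one plausible coarse-to-fine scheme, not the authors' pipeline. It assumes that the first stage predicts a quadrant and the second stage predicts the 10-degree target position within that quadrant, and that fusion means concatenating the two feature vectors. The names `coarse_label`, `fuse`, `NearestCentroid`, and `HierarchicalClassifier` are hypothetical, the data are synthetic, and a simple nearest-centroid rule stands in for the SVM so that the example needs only the Python standard library.

```python
import math
from collections import defaultdict
from statistics import fmean

STEP_DEG = 10  # target spacing stated in the abstract


def coarse_label(angle_deg):
    """Assumed coarse class: quadrant index 0..3 (not taken from the paper)."""
    return (angle_deg % 360) // 90


def fuse(time_series_feats, handcrafted_feats):
    """Feature-level fusion by simple concatenation (an assumption)."""
    return list(time_series_feats) + list(handcrafted_feats)


class NearestCentroid:
    """Tiny stand-in classifier; the paper uses an SVM or an MLP instead."""

    def fit(self, X, y):
        groups = defaultdict(list)
        for x, label in zip(X, y):
            groups[label].append(x)
        # One centroid per class: the per-dimension mean of its samples.
        self.centroids = {
            label: [fmean(col) for col in zip(*rows)]
            for label, rows in groups.items()
        }
        return self

    def predict_one(self, x):
        def sq_dist(label):
            return sum((a - b) ** 2 for a, b in zip(x, self.centroids[label]))
        return min(self.centroids, key=sq_dist)


class HierarchicalClassifier:
    """Stage 1 picks a quadrant; stage 2 picks the angle inside it."""

    def fit(self, X, angles):
        coarse = [coarse_label(a) for a in angles]
        self.stage1 = NearestCentroid().fit(X, coarse)
        self.stage2 = {}
        for q in set(coarse):
            idx = [i for i, c in enumerate(coarse) if c == q]
            self.stage2[q] = NearestCentroid().fit(
                [X[i] for i in idx], [angles[i] for i in idx]
            )
        return self

    def predict_one(self, x):
        q = self.stage1.predict_one(x)
        return self.stage2[q].predict_one(x)


# Synthetic, noise-free stand-in data: idealized horizontal/vertical
# deflections as "time series" features, their magnitude as a
# "handcrafted" feature. Real ExG features would look quite different.
angles = list(range(0, 360, STEP_DEG))
X = []
for a in angles:
    h, v = math.cos(math.radians(a)), math.sin(math.radians(a))
    X.append(fuse([h, v], [math.hypot(h, v)]))

model = HierarchicalClassifier().fit(X, angles)
predictions = [model.predict_one(x) for x in X]
```

Predicting on the same noise-free samples used for fitting only checks that the two stages are wired together correctly; it says nothing about accuracy on real recordings. In practice each stage could be replaced by a trained SVM or MLP and evaluated on held-out data.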