Wearable RemoteFusion: A Mixed Reality Remote Collaboration System with Local Eye Gaze and Remote Hand Gesture Sharing

PubDate: January 2020

Teams: University of Auckland;University of Canterbury

Writers: Prasanth Sasikumar; Lei Gao; Huidong Bai; Mark Billinghurst

Abstract

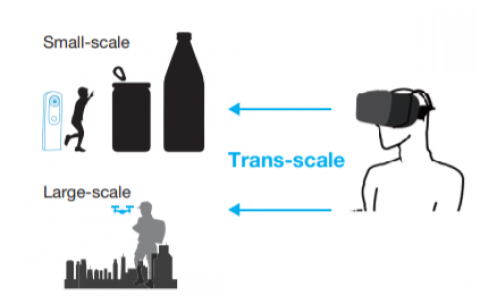

We present a wearable Mixed Reality (MR) remote collaboration system called Wearable RemoteFusion. The system supports spatial annotation and view frustum sharing, and shares natural non-verbal communication cues (eye gaze and hand gestures) to provide visual assistance within a live, stitched dense scene. We describe the design and implementation of the prototype system, and report on a pilot user study investigating how sharing natural gaze and gesture cues affects collaborative performance and user experience. We found that sharing augmented natural cues, namely the local user's eye gaze and the remote user's hand gestures, gave participants a stronger feeling of Co-presence and allowed the remote user to guide the local user through tasks with less physical workload. We discuss implications for collaborative interface design and directions for future research.