Distributed Refinement of Large-Scale 3D Mesh for Accurate Multi-View Reconstruction

PubDate: May 2019

Teams: Beihang University

Writers: Qing Luo; Yao Li; Yue Qi

PDF: Distributed Refinement of Large-Scale 3D Mesh for Accurate Multi-View Reconstruction

Abstract

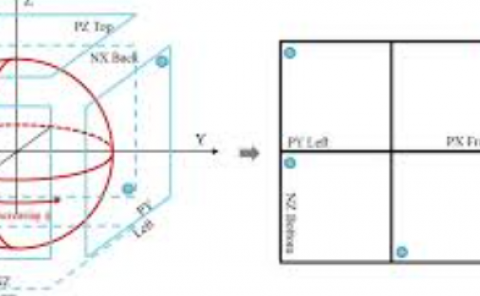

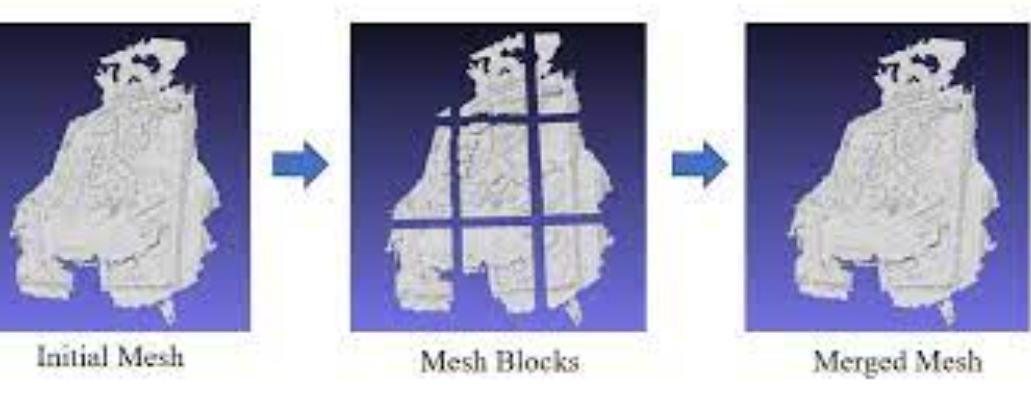

As multi-view reconstruction scenes grow larger, a single machine can no longer handle the refinement of a 3D mesh of a large scene, which includes mesh simplification, subdivision, smoothing, and the recovery of meaningful details. In this paper, we propose a distributed method to refine a large-scale 3D mesh for accurate multi-view reconstruction. First, we divide the initial mesh directly into blocks so that the computing power of each machine can be exploited. We then simplify and subdivide these blocks, which reduces mesh noise and removes redundant vertices, producing a high-quality mesh whose edge lengths do not differ greatly. Next, we propose to partition a graph built over the input images so as to minimize the overlapping image data assigned to each block. Finally, we use a distributed variational surface refinement algorithm to recover meaningful details of the mesh. Experiments on both public large-scale datasets and our own very-large-scale aerial photo sets demonstrate that the proposed distributed method is fast and robust, and is suitable for all kinds of large scenes.
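As a rough illustration of the first two steps, the sketch below splits a triangle mesh into a regular grid of blocks by triangle centroid, then measures how uniform the edge lengths are. The grid-based partition, the function names `split_into_blocks` and `edge_length_cv`, and the use of the coefficient of variation as an edge-uniformity metric are all assumptions made for illustration. The abstract does not say how the authors cut the mesh or how they quantify differences in edge size.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]


def split_into_blocks(vertices: List[Vertex], faces: List[Face], nx: int, ny: int):
    """Hypothetical grid partition: assign each triangle to one cell of an
    nx-by-ny grid over the XY bounding box, based on its centroid.
    Returns {(i, j): (local_vertices, local_faces)} with re-indexed faces."""
    xs = [v[0] for v in vertices]
    ys = [v[1] for v in vertices]
    min_x, min_y = min(xs), min(ys)
    # Guard against zero extent (e.g. all vertices on one line).
    span_x = (max(xs) - min_x) or 1.0
    span_y = (max(ys) - min_y) or 1.0

    buckets: Dict[Tuple[int, int], List[Face]] = defaultdict(list)
    for face in faces:
        cx = sum(vertices[k][0] for k in face) / 3.0
        cy = sum(vertices[k][1] for k in face) / 3.0
        # Clamp so centroids on the upper boundary land in the last cell.
        i = min(int((cx - min_x) / span_x * nx), nx - 1)
        j = min(int((cy - min_y) / span_y * ny), ny - 1)
        buckets[(i, j)].append(face)

    blocks = {}
    for key, block_faces in buckets.items():
        remap: Dict[int, int] = {}  # global vertex index -> local index
        local_vertices: List[Vertex] = []
        local_faces: List[Face] = []
        for face in block_faces:
            local = []
            for g in face:
                if g not in remap:
                    remap[g] = len(local_vertices)
                    local_vertices.append(vertices[g])
                local.append(remap[g])
            local_faces.append((local[0], local[1], local[2]))
        blocks[key] = (local_vertices, local_faces)
    return blocks


def edge_length_cv(vertices: List[Vertex], faces: List[Face]) -> float:
    """Coefficient of variation (std / mean) of unique edge lengths.
    Lower values mean more uniform edge sizes."""
    edges = set()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            edges.add((min(u, v), max(u, v)))
    lengths = [math.dist(vertices[u], vertices[v]) for u, v in edges]
    mean = sum(lengths) / len(lengths)
    var = sum((length - mean) ** 2 for length in lengths) / len(lengths)
    return math.sqrt(var) / mean
```

In this sketch a triangle that straddles a cell border is assigned entirely to one block, so neighbouring blocks share boundary vertices. A real distributed pipeline would need some way to keep those seams consistent after each block is simplified, subdivided, and refined independently.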