Event Based, Near-Eye Gaze Tracking Beyond 10,000Hz

PubDate: April 2021

Teams: University of California, Berkeley; Stanford University; KTH Royal Institute of Technology

Writers: Bhuvaneswari Sarupuri; Simon Hoermann; Mary C. Whitton; Robert W. Lindeman

PDF: Event Based, Near-Eye Gaze Tracking Beyond 10,000Hz

Abstract

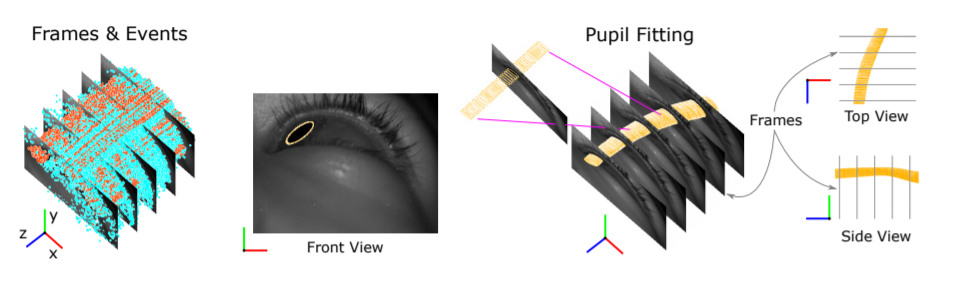

The cameras in modern gaze-tracking systems suffer from fundamental bandwidth and power limitations, constraining data acquisition speed to 300 Hz realistically. This obstructs the use of mobile eye trackers to perform, e.g., low latency predictive rendering, or to study quick and subtle eye motions like microsaccades using head-mounted devices in the wild. Here, we propose a hybrid frame-event-based near-eye gaze tracking system offering update rates beyond 10,000 Hz with an accuracy that matches that of high-end desktop-mounted commercial trackers when evaluated in the same conditions. Our system builds on emerging event cameras that simultaneously acquire regularly sampled frames and adaptively sampled events. We develop an online 2D pupil fitting method that updates a parametric model every one or a few events. Moreover, we propose a polynomial regressor for estimating the point of gaze from the parametric pupil model in real time. Using the first event-based gaze dataset, we demonstrate that our system achieves accuracies of 0.45 degrees to 1.75 degrees for fields of view from 45 degrees to 98 degrees. With this technology, we hope to enable a new generation of ultra-low-latency gaze-contingent rendering and display techniques for virtual and augmented reality.
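
To make the idea of a polynomial gaze regressor more concrete, the following is a minimal sketch, not the authors' implementation. It assumes the parametric pupil model is reduced to a 2D pupil center, that the regressor is a second-degree polynomial in that center, and that it is fit by ordinary least squares on calibration pairs. The function names (`poly_features`, `fit_gaze_regressor`, `predict_gaze`) and the synthetic calibration data are hypothetical and chosen only for illustration; the abstract does not specify the polynomial degree or the input features.

```python
import numpy as np


def poly_features(centers: np.ndarray) -> np.ndarray:
    """Build second-degree features [1, x, y, x*y, x^2, y^2] for each pupil center.

    Assumption: only the pupil center (x, y) is used as input.
    """
    x, y = centers[:, 0], centers[:, 1]
    return np.stack([np.ones_like(x), x, y, x * y, x**2, y**2], axis=1)


def fit_gaze_regressor(centers: np.ndarray, targets: np.ndarray) -> np.ndarray:
    """Least-squares fit of polynomial coefficients mapping pupil centers to gaze points."""
    features = poly_features(centers)
    coeffs, *_ = np.linalg.lstsq(features, targets, rcond=None)
    return coeffs  # shape (6, 2): one column per gaze coordinate


def predict_gaze(coeffs: np.ndarray, centers: np.ndarray) -> np.ndarray:
    """Evaluate the fitted polynomial; a single small matrix product per update."""
    return poly_features(centers) @ coeffs


# Synthetic, noise-free calibration data (illustrative only, not from the paper).
rng = np.random.default_rng(0)
centers = rng.uniform(-1.0, 1.0, size=(25, 2))   # hypothetical pupil centers
true_coeffs = rng.normal(size=(6, 2))            # hypothetical ground-truth mapping
targets = poly_features(centers) @ true_coeffs   # corresponding gaze points

coeffs = fit_gaze_regressor(centers, targets)
pred = predict_gaze(coeffs, centers)
max_err = float(np.max(np.abs(pred - targets)))
```

Because prediction is a small fixed-size matrix product, a regressor of this form can in principle be re-evaluated each time the pupil model changes, which is compatible with the per-event update rates described in the abstract.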