Enhancing Lipstick Try-On with Augmented Reality and Color Prediction Model

PubDate: April 2018

Teams: Srinakharinwirot University

Writers: Nuwee Wiwatwattana, Sirikarn Chareonnivassakul, Noppon Maleerutmongkol, Chavin Charoenvitvorakul

PDF: Enhancing Lipstick Try-On with Augmented Reality and Color Prediction Model

Abstract

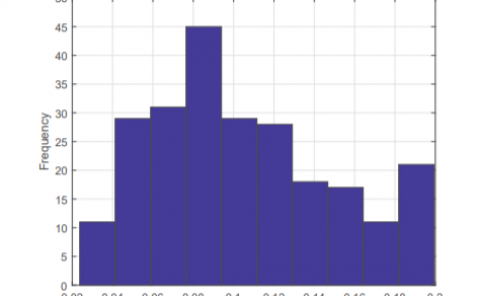

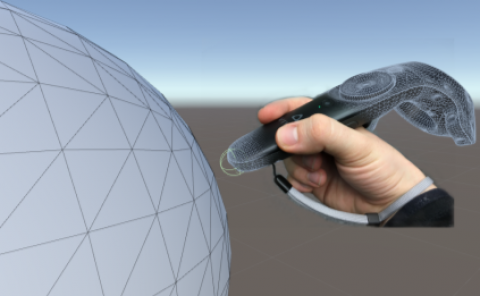

One of the important tasks in purchasing cosmetics is the selection process. Swatching is the best way to shade-match the look and feel of cosmetics. However, a lipstick swatched on the skin is far from a good representation of how the color looks on the lips. This paper aims to develop a virtual lipstick try-on application based on augmented reality and a color prediction model. The goal of the color prediction model is to predict the RGB value of the worn lip color given the undertone color of the lips and a lipstick shade. We studied the performance of several learning models, including simple and multiple linear regression, a reduced-error pruning decision tree, the M5P model tree, support vector regression, stacking, and random forests. We find that ensemble methods work best. However, since ensemble methods win by only a small margin, our application is implemented with M5P, a simpler algorithm that is faster to train and to test. Lip detection and tracking are implemented using the facial landmark detection sub-module of the OpenFace toolkit. Measuring prediction accuracy with MAE and RMSE, we demonstrate that our approach, which predicts worn lip colors, performs better than rendering without the prediction. Lipstick shades that resemble human skin tones have been shown to give more accurate results than dark shades or light pink shades.
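The abstract frames color prediction as a regression from the lips' undertone color and a lipstick shade to the worn lip RGB, scored with MAE and RMSE against a no-prediction baseline. The sketch below illustrates that setup in plain Python under several assumptions. It uses ordinary multiple linear regression (one of the models the abstract lists) rather than the M5P model tree used in the application. It assumes "without the prediction" means rendering the lipstick shade's RGB unchanged. Its data is synthetic, generated from a hypothetical mixing rule (worn = 0.7 × shade + 0.3 × undertone) and not from the authors' measurements. Names such as `WornColorModel` and `synthetic_pair` are hypothetical.

```python
import math
import random


def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_linear(xs, ys, ridge=1e-6):
    """Least-squares fit of y = w . [1, x] via the normal equations (tiny ridge for stability)."""
    rows = [[1.0] + list(x) for x in xs]
    d = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) + (ridge if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    xty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(d)]
    return solve(xtx, xty)


def features(undertone, shade):
    # Six inputs: undertone RGB followed by lipstick shade RGB (assumed encoding).
    return list(undertone) + list(shade)


class WornColorModel:
    """Hypothetical stand-in for the paper's model: one linear regressor per RGB channel."""

    def fit(self, undertones, shades, worn):
        xs = [features(u, s) for u, s in zip(undertones, shades)]
        self.weights = [fit_linear(xs, [w[ch] for w in worn]) for ch in range(3)]
        return self

    def predict(self, undertone, shade):
        x = [1.0] + features(undertone, shade)
        # Clamp each predicted channel to the valid 0..255 range.
        return tuple(min(255.0, max(0.0, sum(wi * xi for wi, xi in zip(w, x))))
                     for w in self.weights)


def mae_rmse(pred, actual):
    """MAE and RMSE pooled over all RGB channels of all samples."""
    errs = [p - a for pc, ac in zip(pred, actual) for p, a in zip(pc, ac)]
    mae = sum(abs(e) for e in errs) / len(errs)
    rmse = math.sqrt(sum(e * e for e in errs) / len(errs))
    return mae, rmse


def synthetic_pair(rng):
    # Hypothetical data: the worn color is a fixed blend of shade and undertone.
    u = tuple(rng.uniform(120, 200) for _ in range(3))
    s = tuple(rng.uniform(0, 255) for _ in range(3))
    w = tuple(0.7 * sc + 0.3 * uc for sc, uc in zip(s, u))
    return u, s, w


rng = random.Random(0)
train = [synthetic_pair(rng) for _ in range(200)]
test = [synthetic_pair(rng) for _ in range(50)]

model = WornColorModel().fit(*zip(*train))
preds = [model.predict(u, s) for u, s, _ in test]
baseline = [s for _, s, _ in test]  # "no prediction": render the shade as-is
actual = [w for _, _, w in test]

model_mae, model_rmse = mae_rmse(preds, actual)
base_mae, base_rmse = mae_rmse(baseline, actual)
print(f"model    MAE={model_mae:.2f} RMSE={model_rmse:.2f}")
print(f"baseline MAE={base_mae:.2f} RMSE={base_rmse:.2f}")
```

Because the synthetic target is exactly linear, the regression recovers it almost perfectly here; this says nothing about accuracy on real lip measurements. A model tree such as M5P could be dropped into the same per-channel interface, trained on the same six input values.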