ManipNet: Neural Manipulation Synthesis with a Hand-Object Spatial Representation

PubDate: August 9, 2021

Teams: The University of Edinburgh;Facebook Reality Labs;The University of Hong Kong

Writers: He Zhang, Yuting Ye, Takaaki Shiratori, Taku Komura

Abstract

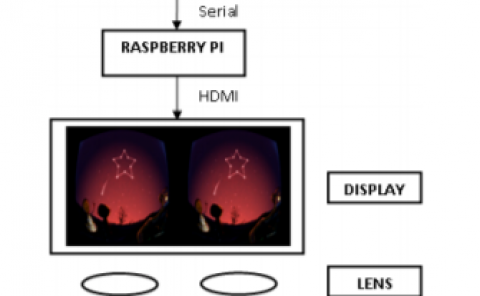

Natural hand manipulations exhibit complex finger maneuvers that adapt to object shapes and the tasks at hand. Learning dexterous manipulation from data in a brute-force way would require a prohibitive number of examples to effectively cover the combinatorial space of 3D shapes and activities. In this paper, we propose a hand-object spatial representation that can achieve generalization from limited data. Our representation combines the global object shape, encoded as voxel occupancies, with local geometric details, encoded as samples of closest distances. A neural network uses this representation to regress finger motions from input trajectories of wrists and objects. Specifically, we provide the network with the current finger pose, past and future trajectories, and the spatial representations extracted from these trajectories. Acting as an autoregressive model, the network then predicts a new finger pose for the next frame. With a carefully chosen hand-centric coordinate system, we can handle single-handed and two-handed motions in a unified framework. Learning from a small number of primitive shapes and kitchenware objects, the network is able to synthesize a variety of finger gaits for grasping, in-hand manipulation, and bimanual object handling on a rich set of novel shapes and functional tasks. We also present a live demo of manipulating virtual objects in real time using a simple physical prop. Our system is useful for offline animation and for real-time applications that can tolerate a small delay.
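
The pipeline described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation. Every name here (`voxel_occupancy`, `closest_distances`, `trajectory_window`, `rollout`, `predict_fn`, `sensor_offsets`) is hypothetical, the object is assumed to be a point cloud, the hand-centric frame is reduced to a translation by the wrist position, and `predict_fn` stands in for the trained network, whose architecture the abstract does not specify.

```python
import math

def voxel_occupancy(points, center, half_extent, res):
    """Coarse global shape: a res^3 occupancy grid over a cube centered at `center`."""
    grid = [0] * (res ** 3)
    cell = 2.0 * half_extent / res
    for p in points:
        idx = []
        for axis in range(3):
            i = math.floor((p[axis] - center[axis] + half_extent) / cell)
            if not 0 <= i < res:
                break  # point lies outside the grid on this axis
            idx.append(i)
        else:
            grid[(idx[0] * res + idx[1]) * res + idx[2]] = 1
    return grid

def closest_distances(sensors, points):
    """Local detail: distance from each hand sample point to its nearest object point."""
    return [min(math.dist(s, p) for p in points) for s in sensors]

def trajectory_window(traj, t, past, future):
    """Past and future samples around frame t, clamped at the sequence ends."""
    last = len(traj) - 1
    return [traj[min(max(t + k, 0), last)] for k in range(-past, future + 1)]

def rollout(initial_pose, wrist_traj, object_points, sensor_offsets, predict_fn,
            past=2, future=2, half_extent=0.2, res=4):
    """Autoregressive loop: each predicted pose is fed back in for the next frame."""
    pose = list(initial_pose)
    poses = [pose]
    for t in range(len(wrist_traj) - 1):
        wrist = wrist_traj[t]
        # Assumption: sensors are fixed offsets from the wrist (no rotation, no finger motion).
        sensors = [tuple(w + o for w, o in zip(wrist, off)) for off in sensor_offsets]
        features = {
            "occupancy": voxel_occupancy(object_points, wrist, half_extent, res),
            "distances": closest_distances(sensors, object_points),
            "window": trajectory_window(wrist_traj, t, past, future),
        }
        pose = predict_fn(pose, features)  # placeholder for the trained network
        poses.append(pose)
    return poses
```

In this sketch, the occupancy grid plays the role of the global shape cue and the distance samples play the role of the local geometric cue. A real system would also move the sensor points with the predicted finger pose and express all features in a rotated hand-centric frame, which this example omits for brevity.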