OpenRooms: An Open Framework for Photorealistic Indoor Scene Datasets

PubDate: June 2021

Teams: Zhengqin Li1, Ting-Wei Yu1, Shen Sang1, Sarah Wang1, Meng Song1, Yuhan Liu1, Yu-Ying Yeh1, Rui Zhu1, Nitesh Gundavarapu1, Jia Shi1, Sai Bi1, Hong-Xing Yu1, Zexiang Xu2, Kalyan Sunkavalli2, Miloš Hašan2, Ravi Ramamoorthi1, Manmohan Chandraker1

Writers: 1 UC San Diego, 2 Adobe Research

Abstract

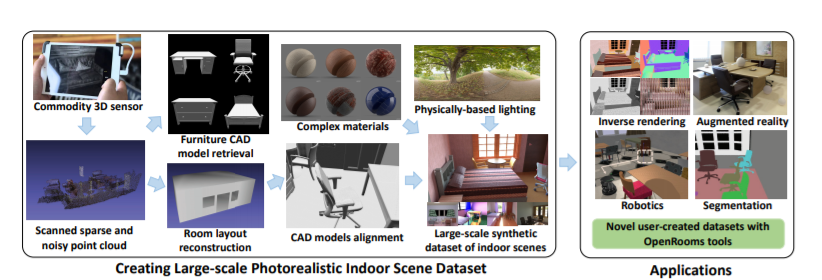

We propose a novel framework for creating large-scale photorealistic datasets of indoor scenes, with ground-truth geometry, material, lighting and semantics. Our goal is to make the dataset creation process widely accessible, transforming scans into photorealistic datasets with high-quality ground truth for appearance, layout, semantic labels, spatially-varying BRDF and complex lighting, including direct, indirect and visibility components. This enables important applications in inverse rendering, scene understanding and robotics. We show that deep networks trained on the proposed dataset achieve competitive performance for shape, material and lighting estimation on real images, enabling photorealistic augmented reality applications such as object insertion and material editing. We also show that our semantic labels can be used for segmentation and multi-task learning. Finally, we demonstrate that our framework can be integrated with physics engines to create virtual robotics environments with unique ground truth, such as friction coefficients and correspondence to real scenes. The dataset and all the tools to create such datasets will be made publicly available.