Scene-aware Generative Network for Human Motion Synthesis

PubDate: May 2021

Teams: The Chinese University of Hong Kong; Nanyang Technological University; Centre of Perceptual and Interactive Intelligence

Writers: Jingbo Wang, Sijie Yan, Bo Dai, Dahua Lin

PDF: Scene-aware Generative Network for Human Motion Synthesis

Abstract

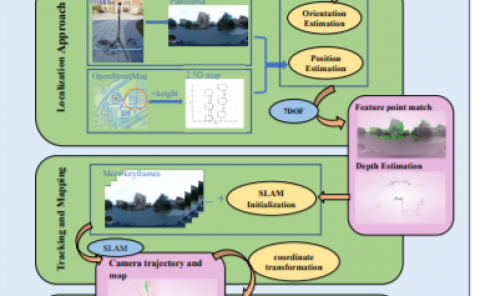

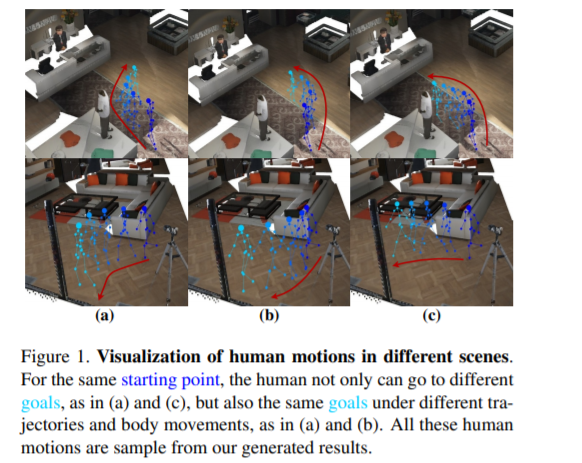

In this paper, we revisit human motion synthesis, a task useful in many real-world applications. Although a number of methods have previously been developed for this task, they are often limited in two respects: they focus on body poses while neglecting the movement of the person's location, and they ignore the impact of the environment on human motion. We propose a new framework that takes the interaction between the scene and human motion into account. Given the uncertainty of human motion, we formulate the task as a generative one, whose objective is to generate plausible human motion conditioned on both the scene and the person's initial position. The framework factorizes the distribution of human motions into a distribution of movement trajectories conditioned on the scene, and a distribution of body pose dynamics conditioned on both the scene and the trajectory. We further derive a GAN-based learning approach, with discriminators that enforce compatibility between the human motion and the contextual scene, as well as 3D-to-2D projection constraints. We assess the effectiveness of the proposed method on two challenging datasets that cover both synthetic and real-world environments.
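The two-stage factorization (trajectory first, then pose dynamics) and the idea of a 3D-to-2D projection constraint can be made concrete with a minimal Python sketch. Everything in it is a hypothetical toy stand-in, not the authors' networks or training code. `sample_trajectory` plays the role of the trajectory distribution conditioned on the scene (reduced here to a rectangular walkable region `bounds`) and the initial position. `sample_poses` plays the role of the pose-dynamics distribution; it uses only the trajectory, whereas the paper also conditions this stage on the scene. The paper enforces projection constraints through a GAN discriminator. The sketch swaps in a plain pinhole reprojection error (`project`, `reprojection_error`, with assumed camera intrinsics `K` and an assumed three-joint skeleton) only to show what such a constraint measures.

```python
import numpy as np

# Hypothetical three-joint skeleton (pelvis, neck, head) as offsets from the root,
# in metres, in a camera frame with x right, y down and z forward.
SKELETON = np.array([[0.0, 0.0, 0.0],
                     [0.0, -0.5, 0.0],
                     [0.0, -0.7, 0.0]])


def sample_trajectory(bounds, start, num_steps, rng, step=0.1):
    """Toy stand-in for p(trajectory | scene, start): a random walk on the
    ground plane (x, z), clipped to the walkable region given by `bounds`."""
    xmin, xmax, zmin, zmax = bounds
    traj = [np.asarray(start, dtype=float)]
    for _ in range(num_steps - 1):
        nxt = traj[-1] + rng.normal(scale=step, size=2)
        traj.append(np.clip(nxt, [xmin, zmin], [xmax, zmax]))
    return np.stack(traj)  # shape (T, 2)


def sample_poses(trajectory, rng, root_y=0.5, noise=0.02):
    """Toy stand-in for p(poses | scene, trajectory): place the skeleton at each
    root position and add small joint jitter. Returns shape (T, J, 3)."""
    T = len(trajectory)
    roots = np.column_stack([trajectory[:, 0], np.full(T, root_y), trajectory[:, 1]])
    joints = roots[:, None, :] + SKELETON[None, :, :]
    return joints + rng.normal(scale=noise, size=joints.shape)


def project(points, K):
    """Pinhole projection of camera-frame points (..., 3) to pixels (..., 2)."""
    uvw = points @ K.T
    return uvw[..., :2] / uvw[..., 2:3]


def reprojection_error(joints3d, keypoints2d, K):
    """Mean pixel distance between projected 3D joints and 2D keypoints
    (a simple substitute for a discriminator-based projection constraint)."""
    return float(np.mean(np.linalg.norm(project(joints3d, K) - keypoints2d, axis=-1)))


rng = np.random.default_rng(0)
bounds = (-1.0, 1.0, 2.0, 6.0)  # assumed walkable x range and depth (z) range, metres
K = np.array([[500.0, 0.0, 320.0],   # assumed focal length and principal point
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])

traj = sample_trajectory(bounds, start=(0.0, 3.0), num_steps=30, rng=rng)  # stage 1
poses = sample_poses(traj, rng)                                             # stage 2
kp2d = project(poses, K)
print(traj.shape, poses.shape, reprojection_error(poses, kp2d, K))
```

Sampling the path before the body articulation mirrors the factorization: the global movement is fixed first, and the pose dynamics are then generated along it. Because `kp2d` here is produced by projecting the same poses, the reprojection error is zero; a non-trivial check would compare against independently observed 2D keypoints.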