Ego-Exo: Transferring Visual Representations from Third-person to First-person Videos

PubDate: June 2021

Teams: Facebook AI Research; UT Austin

Writers: Yanghao Li¹, Tushar Nagarajan¹,², Bo Xiong¹, Kristen Grauman

PDF: Ego-Exo: Transferring Visual Representations from Third-person to First-person Videos

Abstract

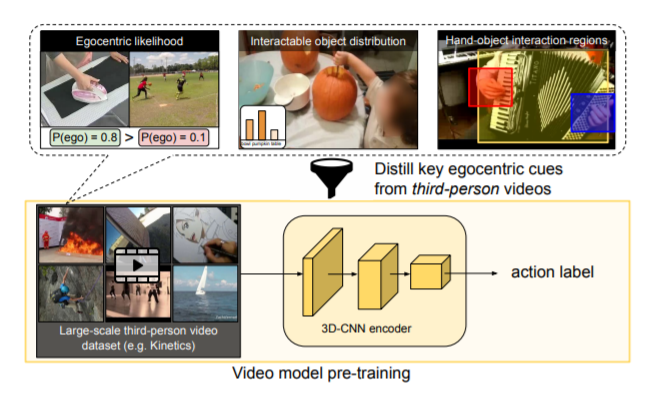

We introduce an approach for pre-training egocentric video models using large-scale third-person video datasets. Learning from purely egocentric data is limited by low dataset scale and diversity, while using purely exocentric (third-person) data introduces a large domain mismatch. Our idea is to discover latent signals in third-person video that are predictive of key egocentric-specific properties. Incorporating these signals as knowledge distillation losses during pre-training results in models that benefit from both the scale and diversity of third-person video data and from representations that capture salient egocentric properties. Our experiments show that our "Ego-Exo" framework can be seamlessly integrated into standard video models; it outperforms all baselines when fine-tuned for egocentric activity recognition, achieving state-of-the-art results on Charades-Ego and EPIC-Kitchens-100.
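The abstract does not spell out the form of the distillation losses, so the following is only a minimal sketch of the general idea: a standard action-classification loss on third-person video, plus weighted auxiliary terms that push the model's auxiliary heads toward soft targets produced by some teacher. The function names, the `"aux"` head name, the use of KL divergence, and the weights are all assumptions made for illustration and are not taken from the paper.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, label):
    """Classification loss for one clip with an integer class label."""
    return -math.log(softmax(logits)[label])


def kl_divergence(teacher_probs, student_logits):
    """KL(teacher || student); used here as a generic distillation term."""
    student_probs = softmax(student_logits)
    return sum(
        t * math.log(t / s)
        for t, s in zip(teacher_probs, student_probs)
        if t > 0
    )


def pretraining_loss(action_logits, action_label,
                     aux_student_logits, aux_teacher_probs, aux_weights):
    """Action loss plus weighted distillation terms (hypothetical layout).

    aux_student_logits, aux_teacher_probs and aux_weights are dicts keyed
    by an illustrative auxiliary-head name.
    """
    loss = cross_entropy(action_logits, action_label)
    for name, weight in aux_weights.items():
        loss += weight * kl_divergence(
            aux_teacher_probs[name], aux_student_logits[name]
        )
    return loss


# Example call with made-up numbers: one auxiliary head named "aux".
example = pretraining_loss(
    action_logits=[2.0, 0.5, -1.0],
    action_label=0,
    aux_student_logits={"aux": [0.0, 0.0]},
    aux_teacher_probs={"aux": [0.9, 0.1]},
    aux_weights={"aux": 0.5},
)
```

When the student's auxiliary predictions already match the teacher's targets, the distillation terms vanish and the total reduces to the plain classification loss, so the auxiliary signals only add gradient where the third-person model disagrees with the teacher.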