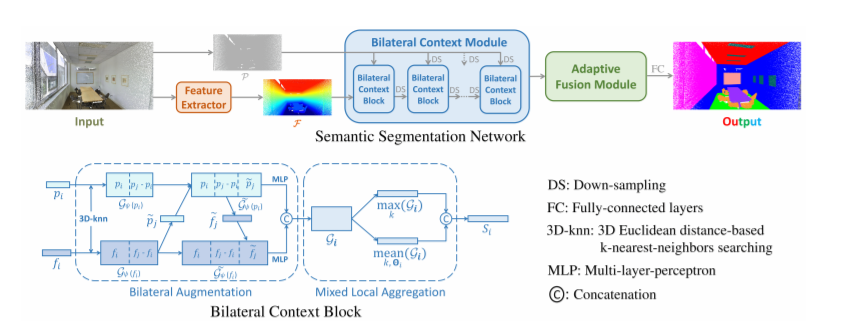

Semantic Segmentation for Real Point Cloud Scenes via Bilateral Augmentation and Adaptive Fusion

PubDate: Apr 2021

Teams: Australian National University, Data61-CSIRO, Australia

Writers: Shi Qiu, Saeed Anwar, Nick Barnes

PDF: Semantic Segmentation for Real Point Cloud Scenes via Bilateral Augmentation and Adaptive Fusion

Abstract

Given the prominence of current 3D sensors, a fine-grained analysis on the basic point cloud data is worthy of further investigation. Particularly, real point cloud scenes can intuitively capture complex surroundings in the real world, but due to 3D data’s raw nature, it is very challenging for machine perception. In this work, we concentrate on the essential visual task, semantic segmentation, for large-scale point cloud data collected in reality. On the one hand, to reduce the ambiguity in nearby points, we augment their local context by fully utilizing both geometric and semantic features in a bilateral structure. On the other hand, we comprehensively interpret the distinctness of the points from multiple resolutions and represent the feature map following an adaptive fusion method at point-level for accurate semantic segmentation. Further, we provide specific ablation studies and intuitive visualizations to validate our key modules. By comparing with state-of-the-art networks on three different benchmarks, we demonstrate the effectiveness of our network.