VisualVoice: Audio-Visual Speech Separation with Cross-Modal Consistency

PubDate: Apr 2021

Teams: The University of Texas at Austin;Stanford University;Facebook AI Research

Writers: Ruohan Gao, Kristen Grauman

PDF: VisualVoice: Audio-Visual Speech Separation with Cross-Modal Consistency

Abstract

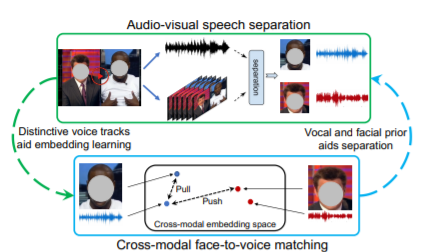

We introduce a new approach for audio-visual speech separation. Given a video, the goal is to extract the speech associated with a face in spite of simultaneous background sounds and/or other human speakers. Whereas existing methods focus on learning the alignment between the speaker’s lip movements and the sounds they generate, we propose to leverage the speaker’s face appearance as an additional prior to isolate the corresponding vocal qualities they are likely to produce. Our approach jointly learns audio-visual speech separation and cross-modal speaker embeddings from unlabeled video. It yields state-of-the-art results on five benchmark datasets for audio-visual speech separation and enhancement, and generalizes well to challenging real-world videos of diverse scenarios. Our video results and code: this http URL.