OpenNEEDS: A Dataset of Gaze, Head, Hand, and Scene Signals During Exploration in Open-ended VR Environments

PubDate: May 21, 2021

Teams: University of Nevada; Facebook Reality Labs

Writers: Kara J. Emery, Marina Zannoli, Lei Xiao, James Warren, Sachin S. Talathi

Abstract

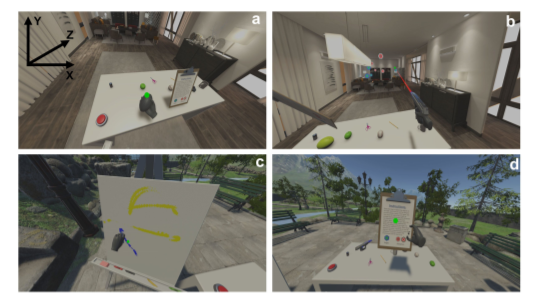

We present OpenNEEDS, the first large-scale, high frame rate, comprehensive, and open-source dataset of Non-Eye (head, hand, and scene) and Eye (3D gaze vectors) data captured from 44 participants as they freely explored two virtual environments with many potential tasks (e.g., reading, drawing, shooting, and object manipulation). With this dataset, we aim to enable research on the relationship between head, hand, scene, and gaze spatiotemporal statistics and its applications to gaze estimation. To demonstrate the power of OpenNEEDS, we show that gaze estimation models using individual non-eye sensors, as well as an early fusion model combining all non-eye sensors, outperform all baseline gaze estimation models considered, which suggests that non-eye sensors are worth considering in the design of robust eye trackers. We anticipate that this dataset will support research progress in many areas and applications such as gaze estimation and prediction, sensor fusion, human-computer interaction, intent prediction, perceptuo-motor control, and machine learning.
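To make the idea of early fusion concrete, here is a minimal sketch, not the model evaluated in the paper. It assumes each non-eye sensor yields a per-frame feature vector, concatenates those vectors before any learning takes place, and fits a plain least-squares regressor to unit 3D gaze vectors. The function names, the feature dimensions, and the choice of a linear model are all illustrative assumptions.

```python
import numpy as np

def early_fusion_features(head, hand, scene):
    """Early fusion: concatenate per-frame sensor features along the feature axis.

    Each input is an array of shape (n_frames, n_features_for_that_sensor).
    The feature layouts are hypothetical, not the dataset's actual schema.
    """
    return np.concatenate([head, hand, scene], axis=1)

def _with_bias(features):
    """Append a constant column so the linear model can learn an offset."""
    return np.hstack([features, np.ones((features.shape[0], 1))])

def fit_linear_gaze_model(features, gaze):
    """Least-squares fit from fused features to 3D gaze vectors.

    A linear regressor stands in here for whatever model one would really use.
    """
    weights, *_ = np.linalg.lstsq(_with_bias(features), gaze, rcond=None)
    return weights

def predict_gaze(weights, features):
    """Predict gaze directions and normalize each one to unit length."""
    pred = _with_bias(features) @ weights
    norms = np.linalg.norm(pred, axis=1, keepdims=True)
    return pred / np.clip(norms, 1e-12, None)

def mean_angular_error_deg(pred, target):
    """Mean angle in degrees between unit predictions and target gaze vectors."""
    target = target / np.linalg.norm(target, axis=1, keepdims=True)
    cos = np.clip(np.sum(pred * target, axis=1), -1.0, 1.0)
    return float(np.degrees(np.arccos(cos)).mean())
```

With real recordings, `head`, `hand`, and `scene` would be replaced by features extracted from the corresponding streams, and the angular error would be computed on held-out frames or participants rather than on the training data.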