Virtual View and Video Synthesis Without Camera Calibration or Depth Map

PubDate: February 2021

Teams: Lanzhou University

Writers: Hai Xu; Yi Wan

PDF: Virtual View and Video Synthesis Without Camera Calibration or Depth Map

Abstract

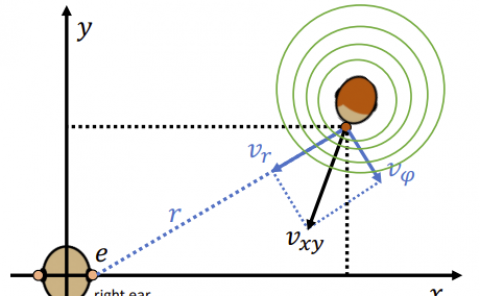

This paper proposes a method for synthesizing virtual views from two real views taken from different perspectives. The method also applies readily to video synthesis: it can quickly generate new virtual views and a smooth video of the view transition without camera calibration or a depth map. First, we extract corresponding feature points from the real views using the SIFT algorithm. Second, we build a virtual multi-camera model. Third, we calculate the coordinates of the feature points in each virtual perspective and project the real views onto that perspective. Finally, the virtual views are synthesized. The method can be applied to most real scenes, such as indoor and street scenes.
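The abstract names the steps but does not specify the virtual camera model or the warp. The sketch below is a minimal illustration under stated assumptions, not the authors' implementation. It assumes matched feature points have already been extracted (for example with SIFT). It places virtual cameras evenly between the two real views and computes each virtual feature point by linear interpolation of its two real positions. This is a view-morphing-style simplification that is only physically consistent for rectified, parallel views. The projection step is approximated by one least-squares affine warp per view. All function names (`virtual_camera_params`, `interpolate_points`, `fit_affine`, `apply_affine`) are hypothetical.

```python
"""Hypothetical sketch: virtual feature coordinates and a per-view affine warp."""
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def virtual_camera_params(num_virtual: int) -> List[float]:
    # Assumption: virtual cameras are evenly spaced between the left (t=0)
    # and right (t=1) real views, excluding the real views themselves.
    return [k / (num_virtual + 1) for k in range(1, num_virtual + 1)]


def interpolate_points(left: List[Point], right: List[Point], t: float) -> List[Point]:
    # Linear interpolation of each matched pair: p(t) = (1 - t) * p_L + t * p_R.
    if len(left) != len(right):
        raise ValueError("left and right must contain the same number of matches")
    return [((1 - t) * xl + t * xr, (1 - t) * yl + t * yr)
            for (xl, yl), (xr, yr) in zip(left, right)]


def _det3(m: Sequence[Sequence[float]]) -> float:
    # Determinant of a 3x3 matrix by cofactor expansion along the first row.
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(m: List[List[float]], v: List[float]) -> List[float]:
    # Solve the 3x3 system m @ x = v with Cramer's rule.
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("degenerate configuration (e.g. all points collinear)")
    sol = []
    for col in range(3):
        mc = [row[:] for row in m]
        for r in range(3):
            mc[r][col] = v[r]
        sol.append(_det3(mc) / d)
    return sol


def fit_affine(src: List[Point], dst: List[Point]) -> Tuple[float, ...]:
    # Least-squares affine map src -> dst via the normal equations:
    #   u = a*x + b*y + c,  v = d*x + e*y + f
    ata = [[0.0] * 3 for _ in range(3)]
    atu = [0.0] * 3
    atv = [0.0] * 3
    for (x, y), (u, v) in zip(src, dst):
        row = (x, y, 1.0)
        for i in range(3):
            for j in range(3):
                ata[i][j] += row[i] * row[j]
            atu[i] += row[i] * u
            atv[i] += row[i] * v
    a, b, c = _solve3(ata, atu)
    d, e, f = _solve3(ata, atv)
    return a, b, c, d, e, f


def apply_affine(params: Tuple[float, ...], pt: Point) -> Point:
    # Map one real-view pixel coordinate into the virtual view.
    a, b, c, d, e, f = params
    x, y = pt
    return (a * x + b * y + c, d * x + e * y + f)


if __name__ == "__main__":
    # Toy matches: the right view is the left view shifted 4 px horizontally.
    left = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
    right = [(4.0, 0.0), (14.0, 0.0), (4.0, 10.0), (14.0, 10.0)]
    for t in virtual_camera_params(3):
        virtual = interpolate_points(left, right, t)
        warp = fit_affine(left, virtual)
        print(t, virtual, apply_affine(warp, (5.0, 5.0)))
```

A single global affine warp ignores parallax between objects at different depths. A practical system would more likely warp piecewise, for example over a triangulation of the matched points, or use a homography per planar region.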