Towards Retina-Quality VR Video Streaming: 15ms Could Save You 80% of Your Bandwidth

PubDate: Sep 2021

Teams: Stanford University

Writers: Luke Hsiao, Brooke Krajancich, Philip Levis, Gordon Wetzstein, Keith Winstein

PDF: Towards Retina-Quality VR Video Streaming: 15ms Could Save You 80% of Your Bandwidth

Abstract

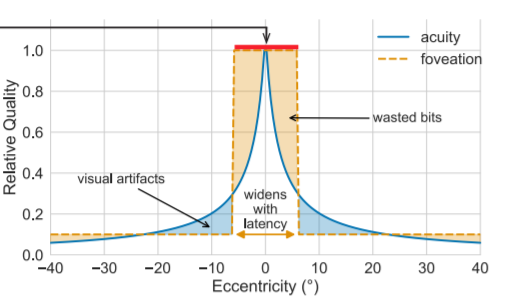

Virtual reality systems today cannot yet stream immersive, retina-quality virtual reality video over a network. One of the greatest challenges to this goal is the sheer data rates required to transmit retina-quality video frames at high resolutions and frame rates. Recent work has leveraged the decay of visual acuity in human perception in novel gaze-contingent video compression techniques. In this paper, we show that reducing the motion-to-photon latency of a system itself is a key method for improving the compression ratio of gaze-contingent compression. Our key finding is that a client and streaming server system with sub-15ms latency can achieve 5x better compression than traditional techniques while also using simpler software algorithms than previous work.
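The 80% in the title and the 5x in the abstract appear to be two views of the same ratio, assuming "5x better compression" means the stream is one fifth the size of the baseline. A minimal sketch of that arithmetic follows; the helper name `bandwidth_savings` is hypothetical and is not taken from the paper.

```python
def bandwidth_savings(compression_gain: float) -> float:
    """Return the fraction of bandwidth saved when a stream becomes
    `compression_gain` times smaller than the baseline stream.

    Assumption: "Nx better compression" means size_new = size_old / N.
    """
    if compression_gain <= 0:
        raise ValueError("compression_gain must be positive")
    return 1.0 - 1.0 / compression_gain


# A 5x gain leaves 1/5 of the original data, so 4/5 is saved.
print(f"{bandwidth_savings(5.0):.0%}")  # prints "80%"
```

The same relation shows diminishing returns: under this assumption a 10x gain saves 90%, only ten percentage points more than a 5x gain.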