Instant Reality: Gaze-Contingent Perceptual Optimization for 3D Virtual Reality Streaming

PubDate: Jan 2022

Affiliations: New York University; Adobe Research

Authors: Shaoyu Chen, Budmonde Duinkharjav, Xin Sun, Li-Yi Wei, Stefano Petrangeli, Jose Echevarria, Claudio Silva, Qi Sun

PDF: Instant Reality: Gaze-Contingent Perceptual Optimization for 3D Virtual Reality Streaming

Abstract

Media streaming has been adopted for a variety of applications such as entertainment, visualization, and design. Unlike video/audio streaming, where content is usually consumed sequentially, 3D applications such as gaming require streaming 3D assets to support client-side interactions such as object manipulation and viewpoint movement. Compared to audio and video streaming, 3D streaming often involves larger data sizes, yet it must deliver them with lower latency to maintain the rendering quality, resolution, and responsiveness needed for perceptual comfort. Streaming 3D assets can therefore be even more challenging than streaming audio or video, and existing solutions often suffer from long loading times or limited quality.

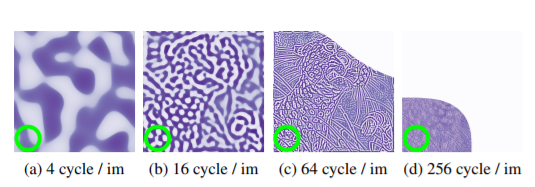

To address this critical and timely issue, we propose a perceptually optimized progressive 3D streaming method that targets spatial quality and temporal consistency in immersive interactions. Based on human visual mechanisms in the frequency domain, our model selects which data to stream and schedules its delivery for optimal spatial-temporal quality. We also train a neural network to accelerate this decision process for real-time client-server applications. We evaluate our method via subjective studies and objective analysis under varying network conditions (from 3G to 5G) and client devices (head-mounted displays and traditional displays), and demonstrate better visual quality and temporal consistency than alternative solutions.
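The abstract does not spell out the selection model, so the following is only a rough illustration of what gaze-contingent, frequency-domain selection under a bandwidth budget can look like. It is not the authors' method or their neural network. Each candidate chunk is kept only if the spatial frequency it adds falls below an eccentricity-dependent visibility cutoff. The visible chunks are then packed greedily, by perceptual gain per byte, into a byte budget. The cutoff uses the Geisler and Perry (1998) contrast-threshold model as a stand-in. The `Chunk` fields, the `importance` weight, and the greedy packing are all hypothetical assumptions.

```python
import math
from dataclasses import dataclass

# Stand-in visibility model (Geisler & Perry, 1998), NOT the paper's model.
ALPHA = 0.106     # spatial-frequency decay constant
E2 = 2.3          # half-resolution eccentricity, in degrees
CT0 = 1.0 / 64    # minimum contrast threshold (at the fovea)


def cutoff_frequency(eccentricity_deg: float) -> float:
    """Highest visible spatial frequency (cycles/degree) at a given eccentricity."""
    return E2 * math.log(1.0 / CT0) / (ALPHA * (eccentricity_deg + E2))


@dataclass
class Chunk:
    """Hypothetical unit of streamable 3D data (e.g. one refinement level)."""
    name: str
    eccentricity_deg: float  # angular distance from the current gaze point
    frequency_cpd: float     # detail frequency the chunk adds, cycles/degree
    size_bytes: int
    importance: float        # hypothetical weight, e.g. projected screen area


def visible_gain(c: Chunk) -> float:
    """Zero when the added detail is finer than the viewer can resolve there."""
    if c.frequency_cpd <= cutoff_frequency(c.eccentricity_deg):
        return c.importance
    return 0.0


def schedule(chunks: list[Chunk], budget_bytes: int) -> list[str]:
    """Greedily pick visible chunks by gain per byte until the budget is used."""
    candidates = [c for c in chunks if visible_gain(c) > 0]
    candidates.sort(key=lambda c: visible_gain(c) / c.size_bytes, reverse=True)
    chosen, used = [], 0
    for c in candidates:
        if used + c.size_bytes <= budget_bytes:
            chosen.append(c.name)
            used += c.size_bytes
    return chosen


# Example: gaze is on a statue; a wall sits 25 degrees into the periphery.
example = [
    Chunk("statue_coarse", 1.0, 5.0, 100_000, 1.0),
    Chunk("statue_fine", 1.0, 20.0, 400_000, 1.0),
    Chunk("wall_coarse", 25.0, 3.0, 100_000, 2.0),
    Chunk("wall_fine", 25.0, 20.0, 400_000, 2.0),
]
print(schedule(example, 250_000))  # ['wall_coarse', 'statue_coarse']
```

In this example, `wall_fine` is never sent, because 20 cycles/degree lies far above the roughly 3.3 cycles/degree cutoff at 25 degrees eccentricity. The bytes it would have consumed go instead to content near the gaze point. Greedy gain-per-byte packing is a common cheap approximation to the underlying knapsack problem. A real progressive scheduler would also need to enforce that refinements arrive after their base levels and keep choices stable across frames for temporal consistency; this sketch does neither.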