ControllerPose: Inside-Out Body Capture with VR Controller Cameras

Published: April 2022

Affiliation: Carnegie Mellon University

Authors: Karan Ahuja, Vivian Shen, Cathy Fang, Nathan Riopelle, Andy Kong and Chris Harrison


Abstract

Today’s virtual reality (VR) systems generally do not capture lower-body pose. Looking down in most VR experiences, users do not see their virtual legs, only empty space, sometimes with a diffuse shadow, which reduces immersion and embodiment. This is because most contemporary VR systems capture only the motion of a user’s head and hands, using IMUs in the headset and controllers, respectively. Some systems can capture other joints, such as the legs, by using accessory sensors that are either worn or placed in the environment. Such systems are comparatively rare, as the additional hardware cost and the inconvenience of setup have been major deterrents to consumer adoption.

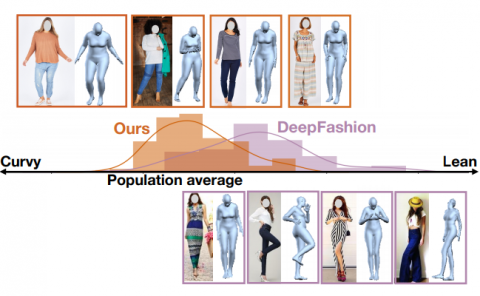

A more practical approach is to use cameras in the headset, though these inherently have a very oblique view of the user, and their line of sight to the lower body can be blocked by the user’s clothing or body (e.g., stomach, breasts, hands). To increase user comfort, headset designs are becoming thinner, which reduces rotational inertia and the torque that the headset’s weight exerts on the head. Unfortunately, this trend moves headset cameras closer to the face, making their view of the body even more oblique and increasingly precluding robust pose tracking with headset-borne cameras.

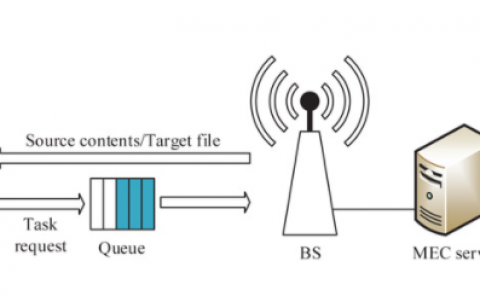

In this research, we consider an alternative and practical method for capturing user body pose: integrating cameras into VR controllers, where batteries, computation and wireless communication already exist. In a small motion capture study, we found that the hands are in front of the body the majority of the time (68.3%), so controller-mounted cameras can opportunistically capture superior views of the body for digitization. At other times, the hands are too close to the body or resting beside it; in these cases, pose tracking will fail or must fall back on, e.g., inverse kinematics using available IMU data. Nevertheless, the full-body tracking available in many instances opens up new and interesting leg-centric interactive experiences. For example, users can now stomp, lean, squat, lunge, balance and perform many other leg-driven interactions in VR (e.g., kicking a soccer ball). We built a series of functional demo applications incorporating these types of motions, illustrating the potential and feasibility of our approach. Our system can track full-body pose with a mean 3D joint error of 6.98 cm.
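The accuracy figure above is reported as a mean 3D joint error. The abstract does not spell out the exact definition, so the following is only a minimal sketch, assuming the common mean per-joint position error: the Euclidean distance between each predicted and ground-truth joint, averaged over all joints and frames, with no root or Procrustes alignment. The function name `mean_joint_error` and the `[frame][joint] -> (x, y, z)` data layout are hypothetical choices for illustration, not part of ControllerPose.

```python
import math
from typing import Sequence

# Hypothetical layout: a 3D joint position as (x, y, z), in consistent units (e.g., cm).
Point3 = tuple[float, float, float]


def mean_joint_error(pred: Sequence[Sequence[Point3]],
                     gt: Sequence[Sequence[Point3]]) -> float:
    """Mean Euclidean distance between predicted and ground-truth 3D joints.

    Assumption: this mirrors a plain mean per-joint position error. Both inputs
    are indexed [frame][joint] and share one coordinate frame; no root or
    Procrustes alignment is applied before measuring distances.
    """
    if len(pred) != len(gt):
        raise ValueError("pred and gt must have the same number of frames")
    total, count = 0.0, 0
    for pred_frame, gt_frame in zip(pred, gt):
        if len(pred_frame) != len(gt_frame):
            raise ValueError("each frame must have the same number of joints")
        for p, g in zip(pred_frame, gt_frame):
            total += math.dist(p, g)  # Euclidean error for one joint in one frame
            count += 1
    if count == 0:
        raise ValueError("no joints to compare")
    return total / count


# Toy example: two frames, two joints each, ground truth at the origin.
gt = [[(0, 0, 0), (0, 0, 0)], [(0, 0, 0), (0, 0, 0)]]
pred = [[(3, 4, 0), (0, 0, 0)], [(0, 6, 8), (0, 0, 1)]]
print(mean_joint_error(pred, gt))  # per-joint errors 5, 0, 10, 1 -> 4.0
```

Whether an alignment step is applied before measuring distances can change the resulting number, so this sketch illustrates the kind of metric involved rather than reproducing the reported 6.98 cm figure.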