Viewing Behavior Supported Visual Saliency Predictor for 360 Degree Videos

PubDate: November 2021

Teams: Shanghai Jiao Tong University

Writers: Yucheng Zhu; Guangtao Zhai; Yiwei Yang; Huiyu Duan; Xiongkuo Min; Xiaokang Yang

PDF: Viewing Behavior Supported Visual Saliency Predictor for 360 Degree Videos

Abstract

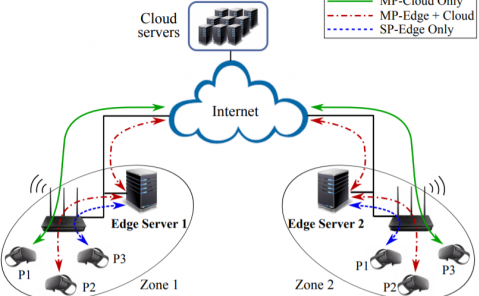

In virtual reality (VR), accurate and precise estimates of a user’s visual fixations and head movements can improve the quality of experience by allocating more computational resources to analyzing and rendering the areas of interest. However, research on how users visually explore content remains insufficient for modeling VR visual attention. To bridge the gap between saliency prediction for traditional 2D content and for omnidirectional content, we construct a visual attention dataset and propose a visual saliency prediction framework for panoramic videos. Centered on instantaneous viewing behavior, we propose a traditional method that adapts 2D saliency models and design a CNN-based model to better predict visual saliency. In the proposed traditional model, the mechanisms of visual attention and viewing behaviors are considered when computing the edge weights of graphs, which are interpreted as Markov chains. The fraction of visual attention diverted to each high-clarity vision (HCV) area is estimated through the equilibrium distribution of this chain. We also propose a Graph-Based CNN model. The RGB channels and optical flow form the spatial-temporal units of HCVs, from which node feature vectors are extracted. Graph convolution is used to learn the mutual information between the node feature vectors of HCVs while retaining geometric information. The feature vectors are then aligned according to the geometric structure of the equirectangular format, and a feature decoder maps the aligned feature maps to the data distribution. We also construct the dynamic omnidirectional monocular (DOM) saliency dataset, with 64 diverse videos evaluated by 28 people. The subjective results show that instantaneous viewing behavior is important in the VR experience. Extensive experiments on the dataset demonstrate the effectiveness of the proposed framework. The dataset will be released to facilitate future studies on visual saliency prediction for 360-degree content.
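
As an illustration of the equilibrium-distribution step mentioned in the abstract, the following is a minimal sketch, not the authors' implementation. It assumes each HCV area is a graph node and that a matrix of non-negative edge weights is already available; the abstract does not specify how the weights are computed, so the values below are hypothetical. Each row is normalized into transition probabilities, and power iteration finds the stationary distribution, which is read as the share of attention per HCV area.

```python
def equilibrium_distribution(weights, tol=1e-12, max_iter=10_000):
    """Stationary distribution of the Markov chain induced by edge weights.

    weights[i][j] is a hypothetical non-negative weight on the edge from
    HCV node i to HCV node j (the paper's actual weighting is not shown here).
    """
    n = len(weights)

    # Row-normalize the weights so each row is a probability distribution.
    transition = []
    for row in weights:
        total = sum(row)
        if total <= 0:
            raise ValueError("every node needs at least one positive outgoing weight")
        transition.append([w / total for w in row])

    # Power iteration: start uniform and apply pi <- pi * P until it stops changing.
    pi = [1.0 / n] * n
    for _ in range(max_iter):
        nxt = [sum(pi[i] * transition[i][j] for i in range(n)) for j in range(n)]
        if max(abs(a - b) for a, b in zip(nxt, pi)) < tol:
            return nxt
        pi = nxt
    return pi


# Hypothetical symmetric weights between three HCV areas (self-loops included).
example_weights = [
    [1.0, 2.0, 1.0],
    [2.0, 1.0, 3.0],
    [1.0, 3.0, 1.0],
]
attention_share = equilibrium_distribution(example_weights)
print(attention_share)
```

Because the example weights are symmetric, the stationary distribution is proportional to each node's total weight (4, 6, and 5 here), which gives a quick sanity check on the iteration. Power iteration is used for simplicity; it converges when the chain is irreducible and aperiodic, which the positive self-loops in this example ensure.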