Deep Billboards towards Lossless Real2Sim in Virtual Reality

PubDate: Aug 2022

Teams: University of Tsukuba; The University of Tokyo; Google Brain

Writers: Naruya Kondo, So Kuroki, Ryosuke Hyakuta, Yutaka Matsuo, Shixiang Shane Gu, Yoichi Ochiai

Abstract

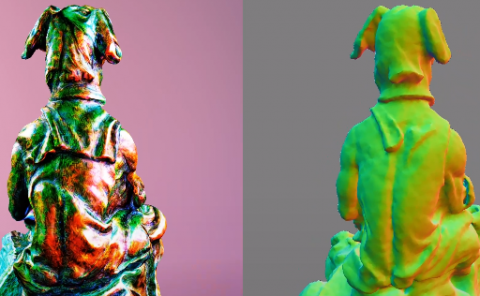

An aspirational goal for virtual reality (VR) is to bring in a rich diversity of real-world objects losslessly. Existing VR applications often convert objects into explicit 3D models with meshes or point clouds, which allow fast interactive rendering but also severely limit the quality and the types of supported objects, fundamentally upper-bounding the “realism” of VR. Inspired by the classic “billboards” technique in gaming, we develop Deep Billboards that model 3D objects implicitly using neural networks, where only a single 2D image is rendered at a time based on the user’s viewing direction. Our system, connecting a commercial VR headset with a server running neural rendering, allows real-time high-resolution simulation of detailed rigid objects, hairy objects, actuated dynamic objects and more in an interactive VR world, drastically narrowing the existing real-to-simulation (real2sim) gap. Additionally, we augment Deep Billboards with physical interaction capability, adapting classic billboards from screen-based games to immersive VR. At our pavilion, visitors can use our off-the-shelf setup to quickly capture their favorite objects and, within minutes, experience them in an immersive and interactive VR world with minimal loss of reality.
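The geometry behind a view-dependent billboard can be illustrated with a minimal sketch. This is not the authors' implementation: the function names, the Y-up coordinate convention, and the assumption that the billboard quad's normal starts along +Z are all illustrative choices. The sketch computes the viewing direction that a neural renderer could be conditioned on, and the yaw that turns a flat quad toward the camera, as in classic billboards.

```python
import math

def view_direction(camera_pos, object_pos):
    """Unit vector pointing from the object toward the camera (hypothetical helper)."""
    dx, dy, dz = (c - o for c, o in zip(camera_pos, object_pos))
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    if norm == 0.0:
        raise ValueError("camera and object positions coincide")
    return (dx / norm, dy / norm, dz / norm)

def billboard_yaw(camera_pos, object_pos):
    """Yaw in radians about the +Y axis that turns a quad with normal +Z toward the camera.

    Assumes a Y-up coordinate system; only the horizontal offset matters.
    """
    dx = camera_pos[0] - object_pos[0]
    dz = camera_pos[2] - object_pos[2]
    return math.atan2(dx, dz)

def spherical_angles(direction):
    """Convert a unit direction to (azimuth, elevation) in radians.

    These two angles are one plausible way to describe the viewpoint
    that a view-conditioned renderer would receive.
    """
    x, y, z = direction
    azimuth = math.atan2(x, z)
    elevation = math.asin(max(-1.0, min(1.0, y)))  # clamp against rounding error
    return azimuth, elevation

if __name__ == "__main__":
    camera = (1.0, 0.0, 0.0)
    obj = (0.0, 0.0, 0.0)
    print(view_direction(camera, obj))
    print(billboard_yaw(camera, obj))
```

In a setup like the one described, the headset would send the camera pose to the rendering server each frame, the server would return the 2D image for that viewing direction, and the client would display it on a quad rotated by the computed yaw. How the actual system encodes the viewpoint is not specified in the abstract.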