MOTSLAM: MOT-assisted monocular dynamic SLAM using single-view depth estimation

PubDate: Oct 2022

Teams: Kyushu University;Nara Institute of Science and Technology;Fukuoka University;The University of Tokyo

Writers: Hanwei Zhang, Hideaki Uchiyama, Shintaro Ono, Hiroshi Kawasaki


Abstract

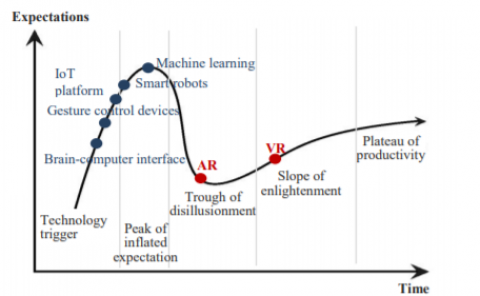

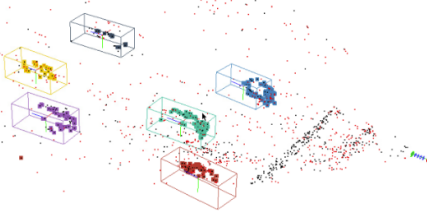

Visual SLAM systems targeting static scenes have been developed with satisfactory accuracy and robustness. Dynamic 3D object tracking has since become a significant capability in visual SLAM, driven by the need to understand dynamic surroundings in scenarios such as autonomous driving and augmented and virtual reality. However, performing dynamic SLAM with monocular images alone remains challenging because of the difficulty of associating dynamic features and estimating their positions. In this paper, we present MOTSLAM, a monocular dynamic visual SLAM system that tracks both the poses and the bounding boxes of dynamic objects. MOTSLAM first performs multiple object tracking (MOT) with associated 2D and 3D bounding box detection to create initial 3D objects. Then, neural-network-based monocular depth estimation is applied to obtain the depth of dynamic features. Finally, camera poses, object poses, and both static and dynamic map points are jointly optimized using a novel bundle adjustment. Our experiments on the KITTI dataset demonstrate that our system achieves the best performance in both camera ego-motion estimation and object tracking among monocular dynamic SLAM systems.
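To make the role of single-view depth concrete, here is a minimal sketch of how a 2D feature with an estimated depth could be lifted to a 3D point, assuming a standard pinhole camera model. The abstract does not describe how MOTSLAM performs this step, so the function names `back_project` and `project` and the intrinsic values are hypothetical and chosen only for illustration.

```python
from typing import Tuple

Point3D = Tuple[float, float, float]


def back_project(u: float, v: float, depth: float,
                 fx: float, fy: float, cx: float, cy: float) -> Point3D:
    """Lift pixel (u, v) with a depth value to a 3D point in the camera frame.

    Assumes an undistorted pinhole camera with focal lengths (fx, fy)
    and principal point (cx, cy), all in pixels.
    """
    if depth <= 0:
        raise ValueError("depth must be positive")
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def project(point: Point3D,
            fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float]:
    """Project a 3D camera-frame point back to pixel coordinates."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical intrinsics, not taken from the paper or from KITTI calibration.
FX, FY, CX, CY = 700.0, 700.0, 600.0, 180.0

# A feature 70 px to the right of the principal point, predicted 10 m away.
point = back_project(670.0, 180.0, 10.0, FX, FY, CX, CY)
pixel = project(point, FX, FY, CX, CY)
```

In a system of this kind, the reprojection of such a point into later frames is the sort of quantity a bundle adjustment would minimize. The sketch covers only the geometry of a single feature, not the optimization itself.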