Deep Learning Classification of Touch Gestures Using Distributed Normal and Shear Force

PubDate: Oct 2022

Affiliations: Stanford University; Reality Labs Research

Authors: Hojung Choi, Dane Brouwer, Michael A. Lin, Kyle T. Yoshida, Carine Rognon, Benjamin Stephens-Fripp, Allison M. Okamura, Mark R. Cutkosky


Abstract

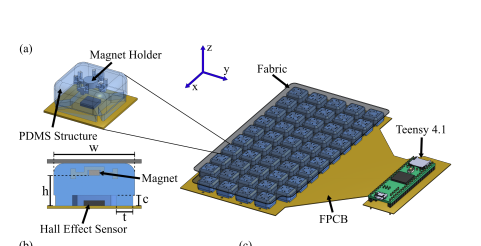

When humans socially interact with another agent (e.g., human, pet, or robot) through touch, they do so by applying varying amounts of force with different directions, locations, contact areas, and durations. While previous work on touch gesture recognition has focused on the spatio-temporal distribution of normal forces, we hypothesize that the addition of shear forces will permit more reliable classification. We present a soft, flexible skin with an array of tri-axial tactile sensors for the arm of a person or robot. We use it to collect data on 13 touch gesture classes through user studies and train a Convolutional Neural Network (CNN) to learn spatio-temporal features from the recorded data. The network achieved a recognition accuracy of 74% with normal and shear data, compared to 66% using only normal force data. Adding distributed shear data improved classification accuracy for 11 out of 13 touch gesture classes.
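The abstract describes a CNN that learns spatio-temporal features from a tri-axial sensor array. The sketch below illustrates that idea; it is not the authors' network. It makes several assumptions that are not in the source:

- Each recording is a `(T, N, C)` array of T time steps over N taxels.
- The channels are ordered `(shear_x, shear_y, normal)`.
- The architecture is a single convolution layer with ReLU, then global average pooling and a linear layer to the 13 gesture classes.

The weights are random and untrained, and all names (`conv2d_valid`, `init_params`, `forward`) are hypothetical. The sketch shows only how the same network accepts either all three channels or the normal channel alone, which is the comparison the study reports.

```python
import numpy as np

NUM_CLASSES = 13  # number of touch gesture classes in the study


def conv2d_valid(x, w, b):
    """'Valid' 2-D cross-correlation over the (time, taxel) axes.

    x: (T, N, C_in) sensor recording; w: (kt, kn, C_in, C_out); b: (C_out,)
    Returns an array of shape (T - kt + 1, N - kn + 1, C_out).
    """
    kt, kn, _, c_out = w.shape
    T, N, _ = x.shape
    out = np.empty((T - kt + 1, N - kn + 1, c_out))
    for t in range(T - kt + 1):
        for n in range(N - kn + 1):
            patch = x[t:t + kt, n:n + kn, :]  # (kt, kn, C_in) window
            out[t, n] = np.tensordot(patch, w, axes=([0, 1, 2], [0, 1, 2])) + b
    return out


def softmax(z):
    z = z - z.max()  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def init_params(c_in, c_hidden=8, kt=5, kn=3, seed=0):
    """Hypothetical random (untrained) weights for a one-layer CNN."""
    rng = np.random.default_rng(seed)
    return {
        "w1": rng.normal(0.0, 0.1, (kt, kn, c_in, c_hidden)),
        "b1": np.zeros(c_hidden),
        "w2": rng.normal(0.0, 0.1, (c_hidden, NUM_CLASSES)),
        "b2": np.zeros(NUM_CLASSES),
    }


def forward(x, p):
    h = np.maximum(conv2d_valid(x, p["w1"], p["b1"]), 0.0)  # conv + ReLU
    pooled = h.mean(axis=(0, 1))  # global average pool over time and taxels
    return softmax(pooled @ p["w2"] + p["b2"])  # probabilities over 13 classes


# Hypothetical recording: 50 time steps, 16 taxels, 3 force channels.
rng = np.random.default_rng(1)
recording = rng.normal(size=(50, 16, 3))  # assumed (shear_x, shear_y, normal)

probs_full = forward(recording, init_params(c_in=3))               # normal + shear
probs_normal = forward(recording[:, :, 2:3], init_params(c_in=1))  # normal only
print(probs_full.shape, probs_normal.shape)  # (13,) (13,)
```

The normal-only variant drops the two shear channels and shrinks the first layer's input depth from 3 to 1. Everything else stays the same. The reported 74% versus 66% accuracies come from the authors' trained model and data, not from this sketch.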