Unpaired Translation from Semantic Label Maps to Images by Leveraging Domain-Specific Simulations

PubDate: Feb 2023

Teams: ETH Zurich; Uppsala University

Writers: Lin Zhang, Tiziano Portenier, Orcun Goksel

PDF: Unpaired Translation from Semantic Label Maps to Images by Leveraging Domain-Specific Simulations

Abstract

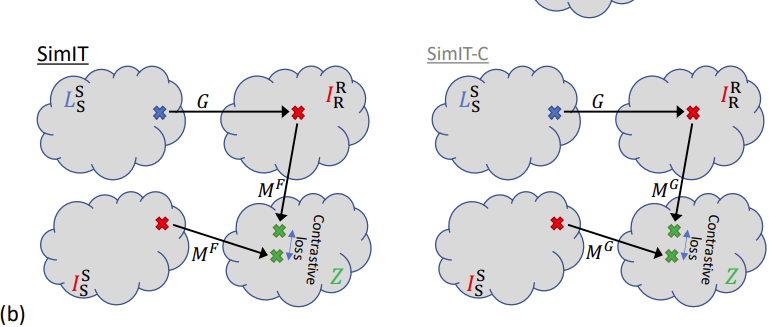

Photorealistic image generation from simulated label maps is needed in several contexts, such as medical training in virtual reality. With conventional deep learning methods, this task requires images paired with semantic annotations, which are typically unavailable. We introduce a contrastive learning framework for generating photorealistic images from simulated label maps by learning from unpaired sets of both. Because scenes can differ substantially between real images and label maps, existing unpaired image translation methods introduce scene-modification artifacts in synthesized images. We use simulated images as surrogate targets for a contrastive loss, while ensuring consistency by using features from a reverse translation network. Our method enables bidirectional label-image translation, which we demonstrate in a variety of scenarios and datasets, including laparoscopy, ultrasound, and driving scenes. Compared with state-of-the-art unpaired translation methods, our method generates realistic and scene-accurate translations.
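The abstract does not spell out the form of the contrastive loss. As a rough illustration only, the sketch below implements a generic InfoNCE-style loss for a single feature vector, which is a common choice in contrastive unpaired translation and not necessarily the authors' formulation. The function name `info_nce`, the `temperature` default, and the reading of the positive as a feature taken from the simulated (surrogate target) image are all assumptions made for this example.

```python
import math


def info_nce(query, positive, negatives, temperature=0.07):
    """Generic InfoNCE loss for one query feature (hypothetical helper).

    query:     feature of a patch in the translated image (list of floats)
    positive:  feature assumed to come from the matching patch of the
               simulated surrogate image (an assumption of this sketch)
    negatives: features of other, non-matching patches
    """
    def normalize(v):
        # L2-normalize; fall back to 1.0 to avoid dividing by zero
        norm = math.sqrt(sum(x * x for x in v)) or 1.0
        return [x / norm for x in v]

    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    q = normalize(query)
    # Cosine similarities scaled by temperature; the positive is at index 0
    logits = [dot(q, normalize(positive)) / temperature]
    logits += [dot(q, normalize(n)) / temperature for n in negatives]

    # Cross-entropy with the positive as the target class,
    # computed with a numerically stable log-sum-exp
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum_exp - logits[0]


# The loss is small when the query matches the positive and large otherwise
matched = info_nce([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]])
mismatched = info_nce([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0]])
```

Minimizing such a loss pulls each translated patch toward its corresponding target patch and pushes it away from the others, which is one way to discourage a translation from changing scene content.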