DreamPaint: Few-Shot Inpainting of E-Commerce Items for Virtual Try-On without 3D Modeling

PubDate: May 2023

Teams: University of Washington; Amazon

Writers: Mehmet Saygin Seyfioglu, Karim Bouyarmane, Suren Kumar, Amir Tavanaei, Ismail B. Tutar

PDF: DreamPaint: Few-Shot Inpainting of E-Commerce Items for Virtual Try-On without 3D Modeling

Abstract

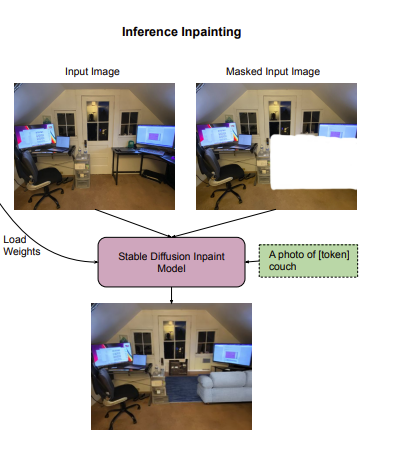

We introduce DreamPaint, a framework that intelligently inpaints any e-commerce product into any user-provided context image. The context image can be, for example, the user's own photo, for virtual try-on of clothes from the e-commerce catalog, or a photo of the user's room, for virtual try-on of a piece of furniture from the catalog. Unlike previous augmented-reality (AR)-based virtual try-on methods, DreamPaint neither uses nor requires 3D modeling of either the e-commerce product or the user context. Instead, it works directly with the 2D product images available in the product catalog database and a 2D picture of the context, for example one taken with the user's phone camera. The method few-shot fine-tunes a pre-trained diffusion model on the masked latents of each item's catalog images (Masked DreamBooth), then loads the resulting weights into a pre-trained inpainting module that preserves the characteristics of the context image. As a result, DreamPaint preserves both the product image and the context (environment/user) image without requiring text guidance to describe the missing part (product or context). DreamPaint can also infer the best 3D angle at which to place the product at the desired location in the user context, even if that angle was not seen in the product's reference 2D images. We compare our results against both text-guided and image-guided inpainting modules and show that DreamPaint outperforms them in both a subjective human study and quantitative metrics.
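To make "fine-tuning with the masked latents" more concrete, here is a minimal NumPy sketch. It assumes that masking means the standard noise-prediction loss is computed only over latent positions covered by the product mask, so the background of each catalog photo does not contribute to the gradient. It also assumes a Stable-Diffusion-style autoencoder with an 8x spatial downsampling factor. The function names (`downsample_mask`, `add_noise`, `masked_denoising_loss`) are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np


def downsample_mask(mask_px: np.ndarray, factor: int = 8) -> np.ndarray:
    """Map a pixel-space product mask (H, W) onto the latent grid.

    Assumption: the latent grid is `factor` times smaller than the image.
    A latent cell counts as "product" if any pixel inside it does (max pooling).
    """
    h, w = mask_px.shape
    assert h % factor == 0 and w % factor == 0, "image size must divide by factor"
    blocks = mask_px.reshape(h // factor, factor, w // factor, factor)
    return blocks.max(axis=(1, 3)).astype(np.float32)


def add_noise(z0: np.ndarray, eps: np.ndarray, alpha_bar: float) -> np.ndarray:
    """Standard DDPM forward process: z_t = sqrt(a) * z0 + sqrt(1 - a) * eps."""
    return np.sqrt(alpha_bar) * z0 + np.sqrt(1.0 - alpha_bar) * eps


def masked_denoising_loss(eps_pred: np.ndarray, eps_true: np.ndarray,
                          mask_latent: np.ndarray) -> float:
    """Mean squared noise-prediction error restricted to the product region.

    eps_pred, eps_true: (C, H, W) latent noise tensors.
    mask_latent: (H, W) array, 1 on the product and 0 on the background.
    """
    sq_err = (eps_pred - eps_true) ** 2
    m = mask_latent[None, :, :]          # broadcast the mask over channels
    n = m.sum() * eps_pred.shape[0]      # number of supervised latent values
    if n == 0:
        return 0.0
    return float((sq_err * m).sum() / n)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mask_px = np.zeros((64, 64))
    mask_px[:32, :] = 1.0                # product occupies the top half
    mask_lat = downsample_mask(mask_px)  # shape (8, 8)

    z0 = rng.standard_normal((4, 8, 8))
    eps = rng.standard_normal((4, 8, 8))
    z_t = add_noise(z0, eps, alpha_bar=0.5)

    # A "prediction" that is only wrong on the background rows.
    eps_pred = eps.copy()
    eps_pred[:, 4:, :] += 10.0
    print(masked_denoising_loss(eps_pred, eps, mask_lat))  # background errors are ignored
```

In this toy run the prediction is wrong only outside the product, so the masked loss is zero while an unmasked mean squared error would be large. Under this assumption, the few-shot fine-tuning signal comes only from the product pixels, which is consistent with the abstract's claim that the learned weights capture the item rather than its catalog background.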