A Mechanistic Transform Model for Synthesizing Eye Movement Data with Improved Realism

PubDate: June 2023

Teams: Texas State University

Writers: Henry Griffith, Samantha Aziz, Dillon J Lohr, Oleg Komogortsev

Abstract

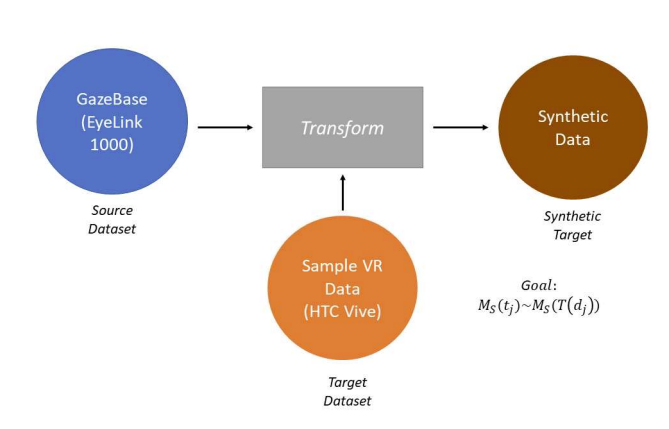

This manuscript demonstrates an improved model-based approach for synthetically degrading previously captured eye movement signals. Signals recorded on a high-quality eye tracking sensor are transformed so that their signal quality resembles that of recordings captured on a low-quality target device. The proposed model improves the realism of the degraded signals over prior approaches by introducing a mechanism for degrading spatial accuracy and temporal precision. In addition, a percentile-matching technique is demonstrated for mimicking the relative distributional structure of the signal quality characteristics of the target data set. The model is shown to improve realism on a per-feature and per-recording basis using data from an EyeLink 1000 eye tracker and an SMI eye tracker embedded within a virtual reality platform. The model improves the median classification accuracy by 35.7% versus the benchmark model, moving it towards the ideal value of 50% (the chance level expected when synthetic recordings cannot be told apart from real ones). This paper also expands the literature by providing an application-agnostic realism assessment workflow for synthetically generated eye movement signals.
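The abstract does not spell out how percentile matching is carried out, so the following is only a minimal sketch of one plausible reading, not the authors' implementation. It assumes each recording is summarized by a single signal quality value (for example, a spatial accuracy estimate), ranks every source recording within the source data set, and assigns it the target data set's value at the same percentile. The function name `percentile_match`, the linear interpolation between target values, and the sample numbers are all hypothetical.

```python
def percentile_match(source_values, target_values):
    """Map each source quality value to the target value at the same percentile.

    Hypothetical helper: the paper's actual procedure may differ.
    """
    n = len(source_values)
    # Indices of the source recordings, ordered from best to worst quality value.
    order = sorted(range(n), key=lambda i: source_values[i])
    targets = sorted(target_values)
    m = len(targets)

    matched = [0.0] * n
    for rank, idx in enumerate(order):
        # Relative position of this recording within the source set, in [0, 1].
        frac = rank / (n - 1) if n > 1 else 0.5
        # Same relative position within the sorted target set.
        pos = frac * (m - 1)
        lo = int(pos)
        hi = min(lo + 1, m - 1)
        # Linear interpolation between the two neighboring target values.
        matched[idx] = targets[lo] + (pos - lo) * (targets[hi] - targets[lo])
    return matched


# Made-up quality values (e.g. degrees of visual angle), for illustration only.
source_quality = [0.3, 0.1, 0.2]
target_quality = [1.0, 2.0, 3.0, 4.0, 5.0]
goal_quality = percentile_match(source_quality, target_quality)
```

Under this reading, the best source recording is degraded towards the best quality level seen on the target device and the worst towards the worst, so the relative ordering of recordings is preserved while the overall distribution follows the target data set.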