A neuro-symbolic approach for multimodal reference expression comprehension

PubDate: June 2023

Teams: The University of Tokyo; Honda R&D Co., Ltd.

Authors: Aman Jain, Anirudh Reddy Kondapally, Kentaro Yamada, Hitomi Yanaka

PDF: A neuro-symbolic approach for multimodal reference expression comprehension

Abstract

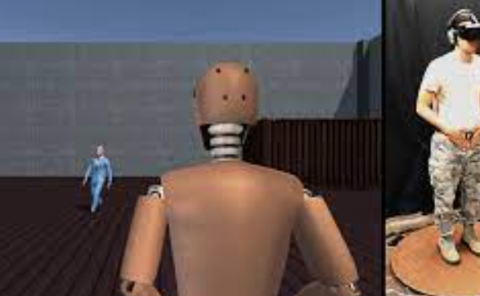

Human-Machine Interaction (HMI) systems have attracted considerable interest in recent years, and reference expression comprehension is one of their main challenges. Traditionally, HMI has been largely limited to the speech and visual modalities. However, to allow more freedom in interaction, recent works have proposed integrating additional modalities, such as gestures, into HMI systems. We consider such an HMI system with pointing gestures and construct a table-top object-picking scenario inside a simulated virtual reality (VR) environment to collect data. Previous works on this task have used deep neural networks to classify the referred object, an approach that lacks transparency. In this work, we propose an interpretable and compositional model for this task based on a neuro-symbolic approach; these properties are crucial to building robust HMI systems for real-world applications. Finally, we show the generalizability of our model to unseen environments and report the results.
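To make "interpretable and compositional" concrete, the sketch below shows one generic way a neuro-symbolic pipeline can resolve a referring expression combined with a pointing gesture. It is a minimal illustration under stated assumptions, not the authors' model: the `SceneObject` records stand in for what a neural perception module would predict, the `program` stands in for what a parser would produce from an utterance such as "pick up that red cup", and all names (`SceneObject`, `angle_to_ray`, `execute`, the `filter`/`point` operations) are hypothetical. Because each step is an explicit symbolic operation, the recorded trace shows exactly how the candidate set shrinks.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneObject:
    """Hypothetical symbolic object record; a neural perception module would predict these fields."""
    name: str
    color: str
    shape: str
    position: tuple[float, float]  # table-top (x, y) coordinates


def angle_to_ray(obj: SceneObject, origin, direction) -> float:
    """Angle (radians) between the pointing ray and the vector from the ray origin to the object."""
    dx = obj.position[0] - origin[0]
    dy = obj.position[1] - origin[1]
    norm = math.hypot(dx, dy) * math.hypot(direction[0], direction[1])
    cos_theta = (dx * direction[0] + dy * direction[1]) / norm
    return math.acos(max(-1.0, min(1.0, cos_theta)))  # clamp guards against rounding error


def execute(program, scene, gesture):
    """Run a hypothetical symbolic program step by step, recording the candidate set after each step."""
    candidates = list(scene)
    trace = [("scene", [o.name for o in candidates])]
    for op, *args in program:
        if op == "filter":
            # Keep only objects whose attribute matches the value from the utterance.
            attr, value = args
            candidates = [o for o in candidates if getattr(o, attr) == value]
        elif op == "point":
            # Resolve "that" by choosing the candidate closest in angle to the pointing ray.
            origin, direction = gesture
            candidates = [min(candidates, key=lambda o: angle_to_ray(o, origin, direction))]
        else:
            raise ValueError(f"unknown operation: {op}")
        trace.append((op, [o.name for o in candidates]))
    return candidates, trace


# Hypothetical scene: two red cups and one blue cup on a table.
scene = [
    SceneObject("cup_a", "red", "cup", (1.0, 0.0)),
    SceneObject("cup_b", "red", "cup", (0.0, 1.0)),
    SceneObject("cup_c", "blue", "cup", (1.0, 1.0)),
]
# Hypothetical parse of "pick up that red cup".
program = [("filter", "color", "red"), ("filter", "shape", "cup"), ("point",)]
# Pointing ray from the origin, aimed roughly along the x-axis.
gesture = ((0.0, 0.0), (1.0, 0.1))

result, trace = execute(program, scene, gesture)
for step in trace:
    print(step)
```

Language alone ("red cup") leaves two candidates here; only the pointing step disambiguates them, and the printed trace makes each decision inspectable, which is the kind of transparency a single end-to-end classifier does not expose.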