From Discrete Tokens to High-Fidelity Audio Using Multi-Band Diffusion

PubDate: June 2023

Authors: Robin San Roman♢,♠ Yossi Adi♢,♣ Antoine Deleforge♠ Romain Serizel♠

Gabriel Synnaeve♢ Alexandre Defossez♢

Affiliations: Meta, Université de Lorraine, Hebrew University of Jerusalem.

PDF: From Discrete Tokens to High-Fidelity Audio Using Multi-Band Diffusion

Abstract

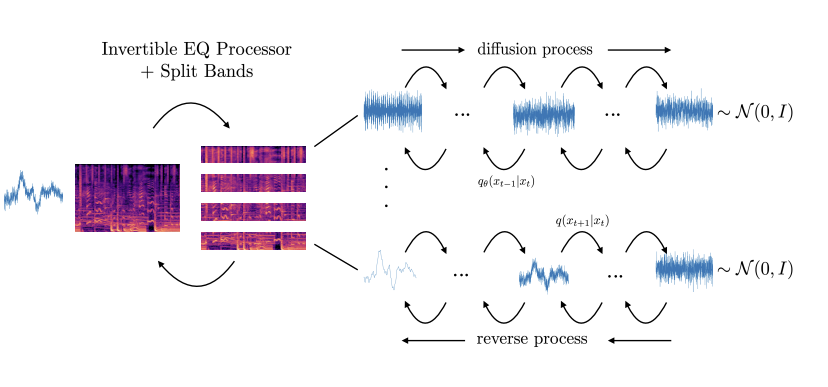

Deep generative models can generate high-fidelity audio conditioned on various types of representations (e.g., mel-spectrograms, mel-frequency cepstral coefficients (MFCCs)). Recently, such models have been used to synthesize audio waveforms conditioned on highly compressed representations. Although such methods produce impressive results, they are prone to generating audible artifacts when the conditioning is flawed or imperfect. An alternative modeling approach is to use diffusion models. However, these have mainly been used as speech vocoders (i.e., conditioned on mel-spectrograms) or for generating signals at relatively low sampling rates. In this work, we propose a high-fidelity multi-band diffusion-based framework that generates any type of audio modality (e.g., speech, music, environmental sounds) from low-bitrate discrete representations. At equal bit rate, the proposed approach outperforms state-of-the-art generative techniques in terms of perceptual quality. Training and evaluation code, along with audio samples, are available in the facebookresearch/audiocraft GitHub project.
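The abstract names the technique without describing it. As a rough illustration only, and not the authors' implementation (their code is in the audiocraft repository), the sketch below shows one plausible reading of "multi-band diffusion". It splits a waveform into frequency bands and applies the standard forward (noising) process of a diffusion model to each band independently. The ideal FFT band split, the band edges, the cosine noise schedule, and the names `split_bands`, `alpha_bar`, `noise_band` and `noise_all_bands` are all hypothetical choices made for illustration. The learned denoiser conditioned on the discrete tokens is omitted.

```python
import numpy as np


def split_bands(x, sample_rate, edges_hz):
    """Split a 1-D signal into frequency bands with ideal (brick-wall) FFT masks.

    Hypothetical band split: the masks partition the rFFT bins, so the
    returned bands sum back to x up to floating-point error.
    """
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / sample_rate)
    bounds = [0.0, *edges_hz, np.inf]
    bands = []
    for lo, hi in zip(bounds[:-1], bounds[1:]):
        mask = (freqs >= lo) & (freqs < hi)
        bands.append(np.fft.irfft(spec * mask, n=len(x)))
    return bands


def alpha_bar(t, s=0.008):
    """Cosine noise schedule (an assumed choice): signal fraction kept at t in [0, 1]."""
    def f(u):
        return np.cos((u + s) / (1 + s) * np.pi / 2) ** 2
    return f(t) / f(0.0)


def noise_band(x0, t, rng):
    """Forward diffusion q(x_t | x_0) = N(sqrt(a) * x_0, (1 - a) * I) for one band."""
    a = alpha_bar(t)
    eps = rng.standard_normal(x0.shape)
    return np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps, eps


def noise_all_bands(x, sample_rate, edges_hz, times, rng):
    """Noise each band independently at its own (hypothetical) diffusion time."""
    bands = split_bands(x, sample_rate, edges_hz)
    if len(times) != len(bands):
        raise ValueError("need one diffusion time per band")
    return [noise_band(b, t, rng) for b, t in zip(bands, times)]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    sr = 24000  # illustrative sample rate
    tt = np.arange(sr) / sr
    x = np.sin(2 * np.pi * 220 * tt) + 0.3 * np.sin(2 * np.pi * 5000 * tt)
    noisy = noise_all_bands(x, sr, [1500, 4000, 8000], [0.2, 0.4, 0.6, 0.8], rng)
    print([round(float(np.std(xt)), 3) for xt, _ in noisy])
```

Treating each band as its own diffusion process lets the noise level differ per frequency range. In a complete system, a network conditioned on the discrete tokens would learn to invert this noising; the sketch stops at the forward process.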