Coordinate Transformer: Achieving Single-stage Multi-person Mesh Recovery from Videos

PubDate: Aug 2023

Teams: (1) Shenzhen Campus of Sun Yat-sen University; (2) Carnegie Mellon University; (3) Samsung Research China – Beijing (SRC-B); (4) UiT The Arctic University of Norway; (5) Sun Yat-sen University; (6) Mohamed bin Zayed University of AI

Writers: Haoyuan Li, Haoye Dong, Hanchao Jia, Dong Huang, Michael C. Kampffmeyer, Liang Lin, Xiaodan Liang

PDF: Coordinate Transformer: Achieving Single-stage Multi-person Mesh Recovery from Videos

Abstract

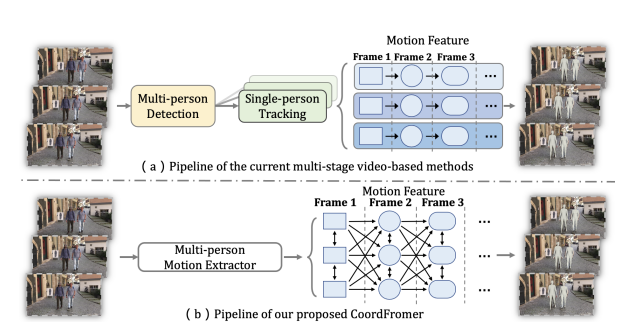

Multi-person 3D mesh recovery from videos is a critical first step towards automatic perception of group behavior in virtual reality, physical therapy and beyond. However, existing approaches rely on multi-stage paradigms, where the person detection and tracking stages are performed in a multi-person setting, while temporal dynamics are only modeled for one person at a time. Consequently, their performance is severely limited by the lack of inter-person interactions in the spatial-temporal mesh recovery, as well as by detection and tracking defects. To address these challenges, we propose the Coordinate transFormer (CoordFormer) that directly models multi-person spatial-temporal relations and simultaneously performs multi-mesh recovery in an end-to-end manner. Instead of partitioning the feature map into coarse-scale patch-wise tokens, CoordFormer leverages a novel Coordinate-Aware Attention to preserve pixel-level spatial-temporal coordinate information. Additionally, we propose a simple yet effective Body Center Attention mechanism to fuse position information. Extensive experiments on the 3DPW dataset demonstrate that CoordFormer significantly improves on the state of the art, outperforming the previously best results by 4.2%, 8.8% and 4.7% according to the MPJPE, PA-MPJPE, and PVE metrics, respectively, while being 40% faster than recent video-based approaches. The released code can be found at this https URL.
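For readers unfamiliar with the metrics named above, the following is a minimal sketch of how MPJPE (mean per-joint position error) and PVE (per-vertex error) are commonly computed: both are the mean Euclidean distance between predicted and ground-truth 3D points, over joints and mesh vertices respectively. PA-MPJPE applies the same measure after a rigid Procrustes alignment, which is omitted here. The function names and toy coordinates are hypothetical and not taken from the paper or its code, and the `relative_improvement` helper assumes the reported percentages are relative error reductions, which the abstract does not state explicitly.

```python
import math


def mean_point_error(pred, gt):
    """Mean Euclidean distance between corresponding 3D points.

    With joints as input this matches the usual definition of MPJPE;
    with mesh vertices as input it matches PVE.
    """
    if len(pred) != len(gt) or not pred:
        raise ValueError("pred and gt must be non-empty and of equal length")
    return sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(pred)


def relative_improvement(baseline_error, new_error):
    """Percentage reduction in error relative to a baseline (lower is better).

    Assumption: this is how a figure such as "4.2%" would be derived.
    """
    return 100.0 * (baseline_error - new_error) / baseline_error


# Toy values for illustration only (not results from the paper).
gt_joints = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
pred_joints = [(0.0, 3.0, 4.0), (1.0, 0.0, 0.0)]

error = mean_point_error(pred_joints, gt_joints)  # (5.0 + 0.0) / 2
gain = relative_improvement(100.0, 95.0)
```

Because the same function covers joints and vertices, the only difference between MPJPE and PVE in this sketch is which point set is passed in.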