Virtual Augmented Reality for Atari Reinforcement Learning

PubDate: Oct 2023

Teams: University of Duisburg-Essen

Writers: Christian A. Schiller

PDF: Virtual Augmented Reality for Atari Reinforcement Learning

Abstract

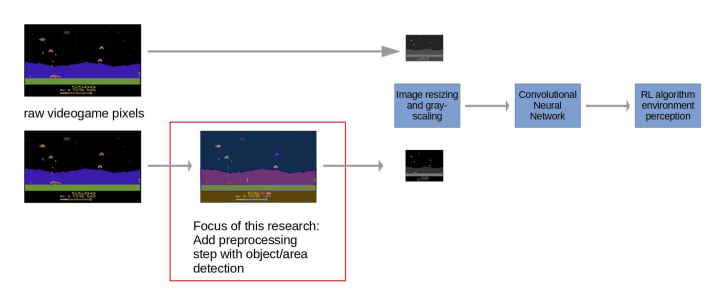

Reinforcement Learning (RL) has achieved significant milestones in the gaming domain, most notably Google DeepMind’s AlphaGo defeating human Go champion Ke Jie. This victory was also made possible through the Arcade Learning Environment (ALE): the ALE has been foundational in RL research, facilitating significant RL algorithm developments such as AlphaGo and others. In current Atari video game RL research, an RL agent’s perception of its environment is based on raw pixel data from the Atari video game screen with minimal image preprocessing. In contrast, cutting-edge ML research outside the Atari video game RL domain is focusing on enhancing image perception. A notable example is Meta Research’s “Segment Anything Model” (SAM), a foundation model capable of segmenting previously unseen images without additional training (zero-shot). This paper addresses a novel methodological question: can state-of-the-art image segmentation models such as SAM improve the performance of RL agents playing Atari video games? The results suggest that SAM can serve as a “virtual augmented reality” for the RL agent, boosting its Atari video game playing performance under certain conditions. Comparing RL agent performance on raw and augmented pixel inputs provides insight into these conditions. Although this paper was limited by computational constraints, the findings show improved RL agent performance for augmented pixel inputs and can inform broader research agendas in the domain of “virtual augmented reality for video game playing RL agents”.
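To make the distinction between raw and augmented pixel inputs concrete, the following is a minimal sketch of one possible augmentation step. It is not the paper's implementation: the function name `augment_frame`, the `alpha` blend weight, and the random color assignment are all hypothetical, and the segmentation masks are assumed to be boolean arrays already produced by a model such as SAM (the model itself is not called here).

```python
import numpy as np

def augment_frame(frame, masks, alpha=0.5, seed=0):
    """Blend one flat color per segment mask into an RGB frame.

    frame: uint8 array of shape (H, W, 3), e.g. a 210x160 Atari screen.
    masks: iterable of boolean arrays of shape (H, W), one per segment
           (assumed to come from a segmentation model such as SAM).
    alpha: blend weight of the overlay color (hypothetical parameter).
    """
    rng = np.random.default_rng(seed)
    out = frame.astype(np.float32)
    for mask in masks:
        # One random color per segment; the choice of color is an assumption.
        color = rng.integers(0, 256, size=3).astype(np.float32)
        out[mask] = (1.0 - alpha) * out[mask] + alpha * color
    return out.round().astype(np.uint8)

# Usage with a synthetic black frame and a single rectangular "segment".
frame = np.zeros((210, 160, 3), dtype=np.uint8)
mask = np.zeros((210, 160), dtype=bool)
mask[10:20, 30:40] = True
augmented = augment_frame(frame, [mask])
```

Under this sketch, the raw-input agent would receive `frame` and the augmented-input agent would receive `augmented`, with the rest of the RL pipeline unchanged, so any performance difference can be attributed to the overlay.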