SplaTAM: Splat, Track & Map 3D Gaussians for Dense RGB-D SLAM

PubDate: Dec 2023

Teams: CMU; MIT

Writers: Nikhil Keetha¹, Jay Karhade¹, Krishna Murthy Jatavallabhula², Gengshan Yang¹, Sebastian Scherer¹, Deva Ramanan¹, Jonathon Luiten¹

PDF: SplaTAM: Splat, Track & Map 3D Gaussians for Dense RGB-D SLAM

Project: SplaTAM: Splat, Track & Map 3D Gaussians for Dense RGB-D SLAM

Abstract

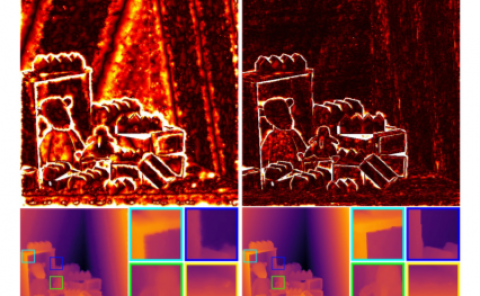

Dense simultaneous localization and mapping (SLAM) is pivotal for embodied scene understanding. Recent work has shown that 3D Gaussians enable high-quality reconstruction and real-time rendering of scenes using multiple posed cameras. In this light, we show for the first time that representing a scene by a 3D Gaussian Splatting radiance field can enable dense SLAM using a single unposed monocular RGB-D camera. Our method, SplaTAM, addresses the limitations of prior radiance field-based representations by providing fast rendering and optimization, the ability to determine whether areas have been previously mapped, and structured map expansion by adding more Gaussians. In particular, we employ an online tracking and mapping pipeline tailored to an underlying Gaussian representation and to silhouette-guided optimization via differentiable rendering. Extensive experiments on simulated and real-world data show that SplaTAM achieves up to 2× the performance of prior state-of-the-art methods in camera pose estimation, map construction, and novel-view synthesis, demonstrating its superiority over existing approaches.
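To make "silhouette-guided optimization" more concrete, here is a minimal NumPy sketch of a tracking-style loss that only counts pixels the current map already covers. It is an illustration under stated assumptions, not the authors' implementation: the function name `silhouette_masked_l1`, the argument layout, the 0.99 opacity threshold, and the depth/color weights are all hypothetical choices, and the rendered images are assumed to come from some differentiable Gaussian rasterizer that is not shown.

```python
import numpy as np

def silhouette_masked_l1(rendered_rgb, rendered_depth, silhouette,
                         target_rgb, target_depth,
                         threshold=0.99, depth_weight=1.0, color_weight=0.5):
    """Sum of per-pixel L1 errors over pixels the map already explains.

    rendered_rgb, target_rgb: (H, W, 3) float arrays.
    rendered_depth, target_depth, silhouette: (H, W) float arrays.
    silhouette is the rendered accumulated opacity in [0, 1].
    threshold and the two weights are illustrative assumptions.
    """
    # A pixel counts as "previously mapped" when its accumulated opacity
    # is high; pixels without a valid depth reading are also skipped.
    mask = (silhouette > threshold) & (target_depth > 0)

    depth_err = np.abs(rendered_depth - target_depth)
    color_err = np.abs(rendered_rgb - target_rgb).sum(axis=-1)
    per_pixel = depth_weight * depth_err + color_weight * color_err

    # Unmapped pixels contribute nothing to the loss.
    return float((per_pixel * mask).sum()), mask
```

The complement of the returned mask marks pixels the map does not yet explain, which is where a mapping step would plausibly add new Gaussians.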