Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality

PubDate: October 2019

Teams: Dixie State University, University of Central Florida

Writers: Kendra Richards, Nikhil Mahalanobis, Kangsoo Kim, Ryan Schubert, Myungho Lee, Salam Daher, Nahal Norouzi, Jason Hochreiter, Gerd Bruder, Greg Welch

Abstract

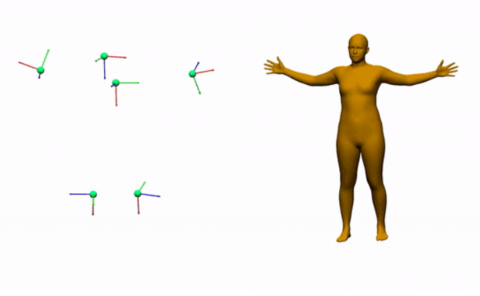

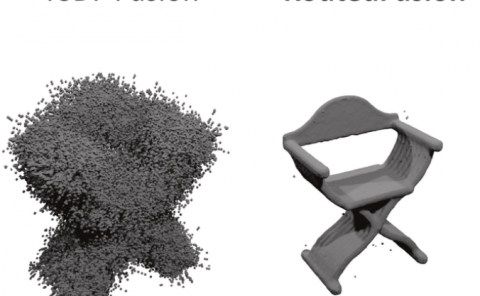

A primary goal of augmented reality (AR) is to seamlessly embed virtual content into a real environment. Many factors can affect the perceived physicality and co-presence of virtual entities, including the hardware capabilities, the fidelity of the virtual behaviors, and the sensory feedback associated with the interactions. In this paper, we present a study investigating participants’ perceptions and behaviors during a time-limited search task in close proximity to virtual entities in AR. In particular, we analyze the effects of (i) visual conflicts in the periphery of an optical see-through head-mounted display, a Microsoft HoloLens, (ii) overall lighting in the physical environment, and (iii) multimodal feedback based on vibrotactile transducers mounted on a physical platform. Our results show significant benefits of vibrotactile feedback and reduced peripheral lighting for spatial presence, social presence, and engagement. We discuss implications of these effects for AR applications.