CMC: Few-shot Novel View Synthesis via Cross-view Multiplane Consistency

PubDate: Mar 2024

Teams: University of Science and Technology of China;Microsoft Research Asia

Writers: Hanxin Zhu, Tianyu He, Zhibo Chen

PDF: CMC: Few-shot Novel View Synthesis via Cross-view Multiplane Consistency

Abstract

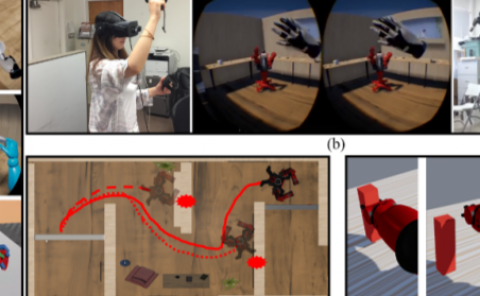

Neural Radiance Field (NeRF) has shown impressive results in novel view synthesis, particularly in Virtual Reality (VR) and Augmented Reality (AR), thanks to its ability to represent scenes continuously. However, when just a few input view images are available, NeRF tends to overfit the given views and thus make the estimated depths of pixels share almost the same value. Unlike previous methods that conduct regularization by introducing complex priors or additional supervisions, we propose a simple yet effective method that explicitly builds depth-aware consistency across input views to tackle this challenge. Our key insight is that by forcing the same spatial points to be sampled repeatedly in different input views, we are able to strengthen the interactions between views and therefore alleviate the overfitting problem. To achieve this, we build the neural networks on layered representations (\textit{i.e.}, multiplane images), and the sampling point can thus be resampled on multiple discrete planes. Furthermore, to regularize the unseen target views, we constrain the rendered colors and depths from different input views to be the same. Although simple, extensive experiments demonstrate that our proposed method can achieve better synthesis quality over state-of-the-art methods.