Real-time visual representations for mobile mixed reality remote collaboration

PubDate: December 2018

Teams: University of Canterbury, University of South Australia, Northwestern Polytechnical University

Writers: Lei Gao;Huidong Bai;Weiping He;Mark Billinghurst;Robert W. Lindeman


Abstract

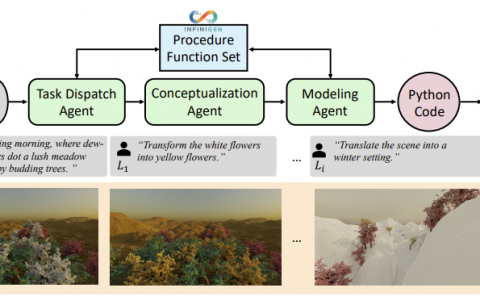

In this study, we present a mixed-reality mobile remote collaboration system that enables an expert to provide real-time assistance over a physical distance. Using Google ARCore position tracking, we integrate the keyframes captured by an external depth sensor attached to the mobile phone into a single 3D point-cloud data set that represents the local physical environment in the VR world. The captured local scene is then wirelessly streamed to the remote side, where the expert views it through a mobile VR headset (HTC VIVE Focus). The remote expert can thus be immersed in the VR scene and provide guidance as if sharing the same work environment with the local worker. In addition, the remote guidance is streamed back to the local side as an AR cue overlaid on the local video see-through display. The proposed system supports a pair of participants, one remote expert guiding one local worker, on physical tasks in a large-scale workspace. By simulating a face-to-face co-working experience with mixed-reality techniques, it aims to make remote guidance more natural and efficient.
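
The keyframe integration step described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes a simple pinhole camera model, a camera-to-world pose given as a 3x3 rotation plus a translation (ARCore's actual pose type and axis conventions differ and would need conversion), and a voxel grid to drop duplicate points. The names `backproject`, `to_world`, and `fuse_keyframes` are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]
# Assumed pose format: (3x3 rotation rows, translation), camera-to-world.
Pose = Tuple[Sequence[Sequence[float]], Sequence[float]]


def backproject(depth: Sequence[Sequence[float]],
                fx: float, fy: float, cx: float, cy: float) -> List[Point]:
    """Convert a depth image (metres, 0 = no reading) to camera-space points."""
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z > 0:  # skip pixels without a valid depth reading
                points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


def to_world(points: Sequence[Point], pose: Pose) -> List[Point]:
    """Apply the tracked camera pose: p_world = R * p_camera + t."""
    rot, trans = pose
    return [
        (
            sum(rot[0][j] * p[j] for j in range(3)) + trans[0],
            sum(rot[1][j] * p[j] for j in range(3)) + trans[1],
            sum(rot[2][j] * p[j] for j in range(3)) + trans[2],
        )
        for p in points
    ]


def fuse_keyframes(keyframes: Sequence[Tuple[Sequence[Sequence[float]], Pose]],
                   intrinsics: Tuple[float, float, float, float],
                   voxel: float = 0.01) -> List[Point]:
    """Merge all keyframes into one cloud, keeping one point per voxel."""
    fx, fy, cx, cy = intrinsics
    cloud: Dict[Tuple[int, int, int], Point] = {}
    for depth, pose in keyframes:
        for p in to_world(backproject(depth, fx, fy, cx, cy), pose):
            key = (math.floor(p[0] / voxel),
                   math.floor(p[1] / voxel),
                   math.floor(p[2] / voxel))
            cloud.setdefault(key, p)  # first point seen in a voxel wins
    return list(cloud.values())
```

Because each keyframe is transformed by its own tracked pose before merging, points from different viewpoints land in one shared world frame. The voxel size trades cloud density against memory and streaming bandwidth; the 1 cm default here is an arbitrary illustrative value.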