An augmented reality interface to contextual information


Teams: Microsoft

Authors: A. Ajanki, M. Billinghurst, H. Gamper, T. Järvenpää, M. Kandemir, S. Kaski, M. Koskela, M. Kurimo, J. Laaksonen, K. Puolamäki, T. Ruokolainen, T. Tossavainen

Publication date: January 2011

Abstract

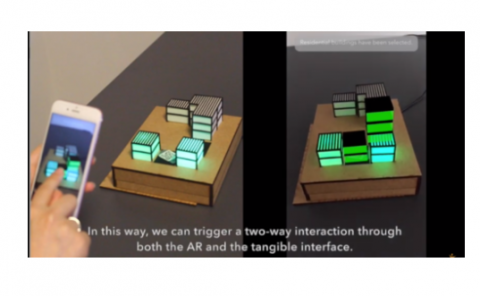

In this paper, we report on a prototype augmented reality (AR) platform for accessing abstract information in real-world pervasive computing environments. On this platform, objects, people, and the environment serve as contextual channels to further information. The user's interest in the surroundings is inferred from eye movement patterns, speech, and other implicit feedback signals, and these data are used to filter information. The results of proactive, context-sensitive information retrieval are overlaid on the view of a handheld or head-mounted display or delivered as synthetic speech. The augmented information then becomes part of the user's context: if the user shows interest in the AR content, the system detects this and provides progressively more information. We describe the first use of the platform, the development of a pilot application called Virtual Laboratory Guide, and present early evaluation results for this application.
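The loop of inferring interest from implicit feedback and then revealing progressively more information can be illustrated with a minimal sketch. It assumes that implicit signals (here, gaze dwell time and spoken mentions) are combined into a single weighted interest score per AR item, and that crossing fixed thresholds reveals the next, more detailed level of content. The class `AugmentedItem`, the weights `SIGNAL_WEIGHTS`, and the thresholds `DETAIL_THRESHOLDS` are hypothetical illustrations; the paper's own interest-inference method is not reproduced here.

```python
from dataclasses import dataclass

# Hypothetical weights for implicit feedback signals (assumptions, not values from the paper).
SIGNAL_WEIGHTS = {"gaze_dwell_s": 1.0, "speech_mention": 2.0}

# Hypothetical interest thresholds; each one crossed reveals one more level of detail.
DETAIL_THRESHOLDS = (1.0, 3.0, 6.0)


@dataclass
class AugmentedItem:
    """One piece of AR content attached to a real-world object or person."""
    name: str
    levels: list[str]      # content ordered from brief to detailed
    interest: float = 0.0  # accumulated, weighted implicit-feedback score

    def __post_init__(self) -> None:
        if not self.levels:
            raise ValueError("an item needs at least one level of content")

    def record_signal(self, kind: str, amount: float) -> None:
        """Add a weighted implicit-feedback observation to this item's interest score."""
        if kind not in SIGNAL_WEIGHTS:
            raise ValueError(f"unknown signal kind: {kind}")
        self.interest += SIGNAL_WEIGHTS[kind] * amount

    def detail_level(self) -> int:
        """Count thresholds crossed, capped at the deepest level of content available."""
        crossed = sum(1 for t in DETAIL_THRESHOLDS if self.interest >= t)
        return min(crossed, len(self.levels) - 1)

    def visible_content(self) -> list[str]:
        """Return all content levels revealed so far, from brief to detailed."""
        return self.levels[: self.detail_level() + 1]


if __name__ == "__main__":
    poster = AugmentedItem("poster", ["Title", "Abstract", "Full text"])
    poster.record_signal("gaze_dwell_s", 1.2)   # user glances at the poster
    print(poster.visible_content())
    poster.record_signal("speech_mention", 1)   # user mentions it aloud
    print(poster.visible_content())
```

Because the score only accumulates, disclosure in this sketch is monotonic: content, once revealed, stays visible. A deployed system would likely also let interest decay over time so that items the user has stopped attending to can collapse again.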