DepthLab: Real-time 3D Interaction with Depth Maps for Mobile Augmented Reality

PubDate: July, 2020

Teams: Google

Writers: Ruofei Du, Eric Turner, Maksym Dzitsiuk, Luca Prasso, Ivo Duarte, Jason Dourgarian, Joao Afonso, Jose Pascoal, Josh Gladstone, Nuno Cruces, Shahram Izadi, Adarsh Kowdle, Konstantine Tsotsos, David Kim

PDF: DepthLab: Real-time 3D Interaction with Depth Maps for Mobile Augmented Reality

Project: DepthLab: Real-time 3D Interaction with Depth Maps for Mobile Augmented Reality

Abstract

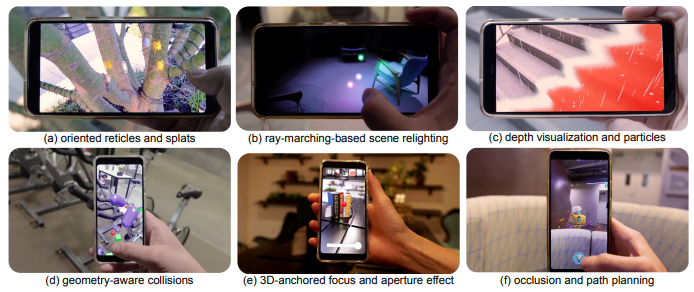

Mobile devices with passive depth sensing capabilities are ubiquitous, and recently active depth sensors have become available on some tablets and AR/VR devices. Although real-time depth data is accessible, its rich value to mainstream AR applications has been sorely under-explored. Adoption of depth-based UX has been impeded by the complexity of performing even simple operations with raw depth data, such as detecting intersections or constructing meshes. In this paper, we introduce DepthLab, a software library that encapsulates a variety of depth-based UI/UX paradigms, including geometry-aware rendering (occlusion, shadows), surface interaction behaviors (physics-based collisions, avatar path planning), and visual effects (relighting, 3D-anchored focus and aperture effects). We break down the usage of depth into localized depth, surface depth, and dense depth, and describe our real-time algorithms for interaction and rendering tasks. We present the design process, system, and components of DepthLab to streamline and centralize the development of interactive depth features. We have open-sourced our software at https://github.com/googlesamples/arcore-depth-lab to external developers, conducted performance evaluation, and discussed how DepthLab can accelerate the workflow of mobile AR designers and developers. With DepthLab we aim to help mobile developers to effortlessly integrate depth into their AR experiences and amplify the expression of their creative vision.
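
To make the "simple operations with raw depth data" mentioned in the abstract concrete, the following is a minimal sketch of a localized depth lookup and a per-pixel occlusion test. It is not DepthLab's API: the function names (`sample_depth`, `is_occluded`, `occlusion_mask`), the row-major list-of-lists depth layout in meters, the normalized screen coordinates, and the 2 cm tolerance are all assumptions made for illustration.

```python
from typing import List

# Assumed layout: depth_map[row][col] holds the distance from the camera
# to the nearest real surface, in meters.
DepthMap = List[List[float]]


def sample_depth(depth_map: DepthMap, u: float, v: float) -> float:
    """Nearest-neighbor depth lookup at normalized screen coordinates.

    u and v are expected in [0, 1]; values outside are clamped to the border.
    """
    height = len(depth_map)
    width = len(depth_map[0])
    x = min(width - 1, max(0, int(u * width)))
    y = min(height - 1, max(0, int(v * height)))
    return depth_map[y][x]


def is_occluded(depth_map: DepthMap, u: float, v: float,
                virtual_depth_m: float, tolerance_m: float = 0.02) -> bool:
    """Return True if a real surface lies in front of a virtual point.

    The tolerance (an assumed value) avoids flicker when the virtual point
    sits almost exactly on the real surface.
    """
    return sample_depth(depth_map, u, v) + tolerance_m < virtual_depth_m


def occlusion_mask(depth_map: DepthMap, virtual_depth_m: float) -> List[List[bool]]:
    """Dense variant: mark every pixel whose real depth is closer than the virtual one."""
    return [[d < virtual_depth_m for d in row] for row in depth_map]


if __name__ == "__main__":
    depth = [[1.0, 2.0],
             [3.0, 4.0]]
    print(is_occluded(depth, 0.75, 0.25, virtual_depth_m=2.5))
    print(occlusion_mask(depth, virtual_depth_m=2.5))
```

The single-point query corresponds loosely to what the abstract calls localized depth, while the full mask is a dense-depth operation; a real renderer would typically perform the dense comparison per fragment on the GPU rather than on the CPU.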