Virtual Borders: Accurate Definition of a Mobile Robot’s Workspace Using Augmented Reality

PubDate: Oct 2018

Teams: Bielefeld University of Applied Sciences; Otto-von-Guericke University Magdeburg

Writers: Dennis Sprute, Klaus Tönnies, Matthias König

Abstract

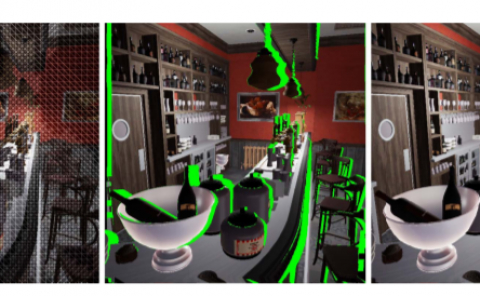

We address the problem of interactively controlling the workspace of a mobile robot to ensure human-aware navigation. This is especially relevant for non-expert users living in human-robot shared spaces, e.g., home environments, since they want to retain control of their mobile robots, such as vacuum cleaning or companion robots. Therefore, we introduce virtual borders that a robot respects while performing its tasks. For this purpose, we employ an RGB-D Google Tango tablet as the human-robot interface in combination with an augmented reality application to flexibly define virtual borders. We evaluated our system with 15 non-expert users concerning accuracy, teaching time, and correctness, and compared the results with baseline methods based on visual markers and a laser pointer. The experimental results show that our method achieves equally high accuracy while significantly reducing the teaching time compared to the baseline methods. This holds for different border lengths, shapes, and variations in the teaching process. Finally, we demonstrated the correctness of the approach, i.e., the mobile robot changes its navigational behavior according to the user-defined virtual borders.
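
The abstract does not state how a virtual border is enforced during navigation. As a minimal sketch of one plausible mechanism, and not necessarily the authors' method, the example below assumes the robot plans on a 2D occupancy grid and that a user-defined border arrives as a polyline of grid cells. The names `line_cells` and `apply_virtual_border`, the grid layout (`grid[y][x]`), and the occupied value `100` are all hypothetical choices made for illustration.

```python
from typing import List, Tuple

Cell = Tuple[int, int]  # (x, y) grid coordinates; hypothetical convention


def line_cells(a: Cell, b: Cell) -> List[Cell]:
    """Return the grid cells on the segment a -> b (Bresenham's line algorithm)."""
    (x0, y0), (x1, y1) = a, b
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    cells: List[Cell] = []
    while True:
        cells.append((x0, y0))
        if (x0, y0) == (x1, y1):
            break
        e2 = 2 * err
        if e2 >= dy:
            err += dy
            x0 += sx
        if e2 <= dx:
            err += dx
            y0 += sy
    return cells


def apply_virtual_border(
    grid: List[List[int]], border: List[Cell], occupied: int = 100
) -> List[List[int]]:
    """Return a copy of the grid with every cell along the border marked occupied.

    Assumption: a planner treats cells equal to `occupied` as obstacles,
    so it will not route the robot across the border.
    """
    out = [row[:] for row in grid]  # leave the physical map untouched
    for a, b in zip(border, border[1:]):
        for x, y in line_cells(a, b):
            if 0 <= y < len(out) and 0 <= x < len(out[0]):
                out[y][x] = occupied
    return out


if __name__ == "__main__":
    free_map = [[0] * 5 for _ in range(5)]
    bordered = apply_virtual_border(free_map, [(0, 2), (4, 2)])
    for row in bordered:
        print(row)
```

Writing the border into a copy keeps the sensed map and the user-defined restrictions separate, so a border could later be removed without re-mapping the environment.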