Visual to Sound: Generating Natural Sound for Videos in the Wild

PubDate: Jun 2018

Teams: University of North Carolina at Chapel Hill; Adobe Research

Writers: Yipin Zhou, Zhaowen Wang, Chen Fang, Trung Bui, Tamara L. Berg

PDF: Visual to Sound: Generating Natural Sound for Videos in the Wild

Abstract

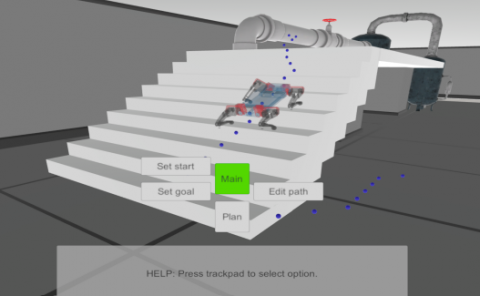

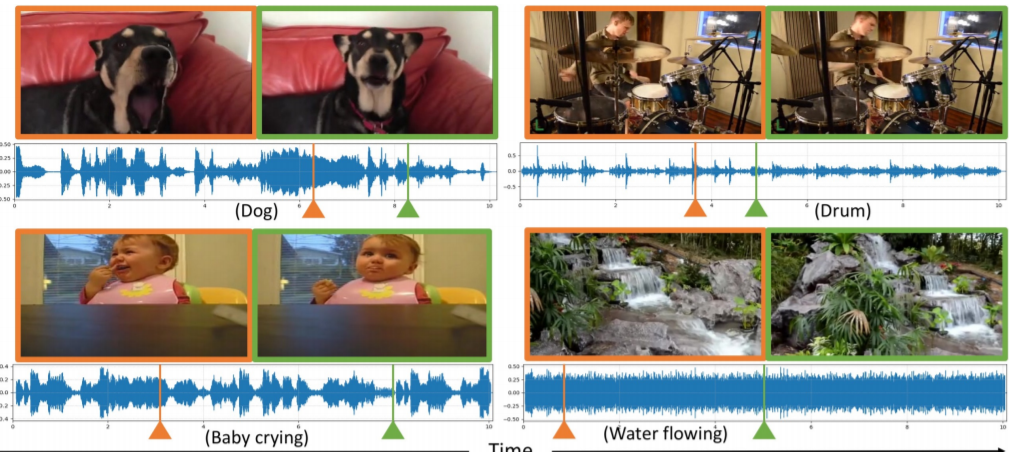

As two of the five traditional human senses (sight, hearing, taste, smell, and touch), vision and sound are basic sources through which humans understand the world. Often correlated during natural events, these two modalities combine to jointly affect human perception. In this paper, we pose the task of generating sound given visual input. Such capabilities could help enable applications in virtual reality (generating sound for virtual scenes automatically) or provide additional accessibility to images or videos for people with visual impairments. As a first step in this direction, we apply learning-based methods to generate raw waveform samples given input video frames. We evaluate our models on a dataset of videos containing a variety of sounds (such as ambient sounds and sounds from people/animals). Our experiments show that the generated sounds are fairly realistic and have good temporal synchronization with the visual inputs.
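The abstract does not say how the input video frames are aligned in time with the generated raw waveform. One common way to keep a frame-conditioned waveform generator synchronized is to map each audio sample to the video frame visible at that instant and reuse that frame's features as conditioning. The sketch below illustrates that alignment step only. It is an assumption for illustration, not the authors' method. The function names `frame_index_for_sample` and `upsample_to_audio_rate` and the 16 kHz / 25 fps rates are hypothetical.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def frame_index_for_sample(t: int, sample_rate: int, fps: int) -> int:
    # Hypothetical helper: audio sample t plays at time t / sample_rate seconds,
    # and the frame on screen at that time is floor(t * fps / sample_rate).
    # Integer arithmetic avoids floating-point off-by-one errors at frame boundaries.
    return (t * fps) // sample_rate


def upsample_to_audio_rate(frame_feats: Sequence[T], sample_rate: int, fps: int) -> List[T]:
    # Hypothetical helper: repeat each per-frame feature so there is exactly one
    # conditioning entry per audio sample over the clip's duration.
    num_samples = len(frame_feats) * sample_rate // fps
    # For every t < num_samples, t * fps < len(frame_feats) * sample_rate,
    # so the computed frame index always stays within bounds.
    return [frame_feats[frame_index_for_sample(t, sample_rate, fps)] for t in range(num_samples)]


# Usage with assumed rates: 16 kHz audio and 25 fps video give 640 samples per frame.
feats = ["frame0", "frame1", "frame2", "frame3"]
cond = upsample_to_audio_rate(feats, sample_rate=16000, fps=25)
print(len(cond))            # 2560 samples for 4 frames (0.16 s)
print(cond[639], cond[640])  # frame0 frame1
```

Nearest-frame repetition is the simplest choice. A learned upsampling layer or interpolation between neighboring frame features are alternatives that give the generator smoother conditioning across frame boundaries.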