Real-time deep hair matting on mobile devices

PubDate: Jan 2018

Teams: ModiFace Inc;University of Toronto

Writers: Alex Levinshtein (1), Cheng Chang (1), Edmund Phung (1), Irina Kezele (1), Wenzhangzhi Guo (1), Parham Aarabi

Abstract

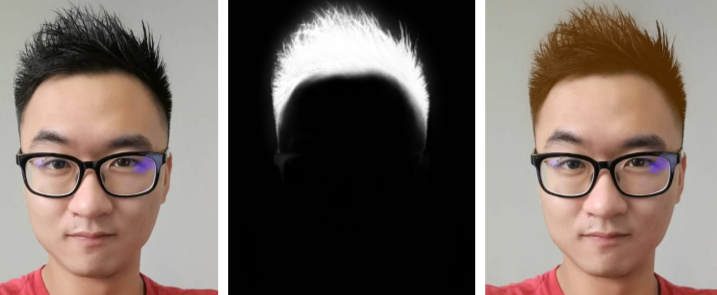

Augmented reality is an emerging technology in many application domains. Among them is the beauty industry, where live virtual try-on of beauty products is of great importance. In this paper, we address the problem of live hair color augmentation. To achieve this goal, hair needs to be segmented quickly and accurately. We show how a modified MobileNet CNN architecture can be used to segment the hair in real time. Instead of training this network on large amounts of accurate segmentation data, which is difficult to obtain, we use crowd-sourced hair segmentation data. While such data is much simpler to obtain, its segmentations are noisy and coarse. Despite this, we show how our system can produce accurate and finely detailed hair mattes while running at over 30 fps on an iPad Pro tablet.
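
To make the role of the hair matte concrete, the sketch below shows one simple way a per-pixel matte could drive hair color augmentation: each pixel is alpha-blended toward a target color in proportion to its matte value. This is an illustrative assumption, not the paper's recoloring method. The function name `recolor_with_matte`, the flat list-of-pixels layout, and the plain linear blend are all hypothetical choices made for brevity.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB channels, each in [0, 1]


def recolor_with_matte(
    image: List[Pixel],
    matte: List[float],
    target_color: Pixel,
) -> List[Pixel]:
    """Blend target_color into image, weighted per pixel by the matte.

    Hypothetical helper: matte[i] is the hair opacity of pixel i,
    where 0.0 means "not hair" and 1.0 means "fully hair".
    """
    if len(image) != len(matte):
        raise ValueError("image and matte must have the same number of pixels")

    out: List[Pixel] = []
    for (r, g, b), alpha in zip(image, matte):
        a = min(max(alpha, 0.0), 1.0)  # clamp the matte value to [0, 1]
        out.append((
            (1.0 - a) * r + a * target_color[0],
            (1.0 - a) * g + a * target_color[1],
            (1.0 - a) * b + a * target_color[2],
        ))
    return out


# Three pixels: background, fully hair, and a soft hair boundary.
image = [(0.2, 0.4, 0.6), (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
matte = [0.0, 1.0, 0.5]
recolored = recolor_with_matte(image, matte, (1.0, 0.0, 0.0))
```

Fractional matte values are what distinguish a matte from a binary segmentation mask: pixels on thin strands or at the hair boundary receive only part of the new color, which avoids hard edges. A practical recoloring step would likely also preserve the original hair's shading rather than painting a flat color.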