Peeking Behind Objects: Layered Depth Prediction from a Single Image

PubDate: Jul 2018

Teams: Technical University of Munich

Writers: Helisa Dhamo, Keisuke Tateno, Iro Laina, Nassir Navab, Federico Tombari

PDF: Peeking Behind Objects: Layered Depth Prediction from a Single Image

Abstract

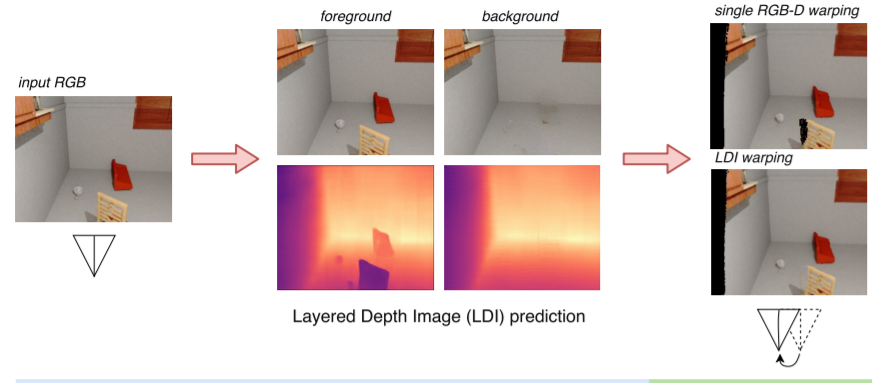

While conventional depth estimation can infer the geometry of a scene from a single RGB image, it fails to estimate scene regions that are occluded by foreground objects. This limits the use of depth prediction in augmented and virtual reality applications that aim at scene exploration by synthesizing the scene from a different vantage point, or at diminished reality. To address this issue, we shift the focus from conventional depth map prediction to the regression of a specific data representation called a Layered Depth Image (LDI), which contains information about the occluded regions in the reference frame and can fill in occlusion gaps in case of small view changes. We propose a novel approach based on Convolutional Neural Networks (CNNs) to jointly predict depth maps and foreground separation masks, which are used to condition Generative Adversarial Networks (GANs) for hallucinating plausible color and depth in the initially occluded areas. We demonstrate the effectiveness of our approach for novel scene view synthesis from a single image.
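
To make the role of the LDI concrete, the following is a minimal sketch of how a second depth layer can fill a disocclusion gap under a small sideways view change. It is not the paper's network or rendering pipeline: the function `render_ldi`, the parameter `shift_scale` (standing in for focal length times baseline), and the toy one-row scene are all assumptions introduced for illustration, and the LDI layers are hand-built here rather than predicted by a CNN or GAN.

```python
import numpy as np


def render_ldi(layers, shift_scale):
    """Forward-warp all LDI layers into a new view with a shared z-buffer.

    layers: list of (rgb, depth, valid) tuples; rgb is (H, W, 3), depth is
        (H, W), valid is a boolean (H, W) mask of pixels stored in that layer.
    shift_scale: hypothetical focal_length * baseline; each pixel moves
        horizontally by round(shift_scale / depth) pixels.
    Returns the warped color, warped depth, and a mask of unfilled pixels.
    """
    h, w, _ = layers[0][0].shape
    out_rgb = np.zeros((h, w, 3), dtype=layers[0][0].dtype)
    out_depth = np.full((h, w), np.inf)
    for rgb, depth, valid in layers:
        ys, xs = np.nonzero(valid)
        z = depth[ys, xs]
        xt = xs + np.rint(shift_scale / z).astype(int)
        inside = (xt >= 0) & (xt < w)
        for y, x, x_new, d in zip(ys[inside], xs[inside], xt[inside], z[inside]):
            # Keep the nearest surface that lands on each target pixel.
            if d < out_depth[y, x_new]:
                out_depth[y, x_new] = d
                out_rgb[y, x_new] = rgb[y, x]
    return out_rgb, out_depth, np.isinf(out_depth)


# Toy scene: one row of 8 pixels, a red object (depth 1) in columns 2-3
# in front of a blue wall (depth 2).
h, w = 1, 8
fg_mask = np.zeros((h, w), dtype=bool)
fg_mask[0, 2:4] = True

visible_depth = np.where(fg_mask, 1.0, 2.0)
visible_rgb = np.zeros((h, w, 3), dtype=np.uint8)
visible_rgb[fg_mask] = (255, 0, 0)
visible_rgb[~fg_mask] = (0, 0, 255)

# Second layer: the wall hidden behind the object (assumed known here).
hidden_depth = np.full((h, w), 2.0)
hidden_rgb = np.zeros((h, w, 3), dtype=np.uint8)
hidden_rgb[..., 2] = 255

layer1 = (visible_rgb, visible_depth, np.ones((h, w), dtype=bool))
layer2 = (hidden_rgb, hidden_depth, fg_mask)

_, _, holes_single = render_ldi([layer1], shift_scale=2.0)
rgb_ldi, _, holes_ldi = render_ldi([layer1, layer2], shift_scale=2.0)

print("holes with depth map only:", holes_single[0].astype(int))
print("holes with two-layer LDI: ", holes_ldi[0].astype(int))
```

With only the visible layer, the warped view has a gap where the object used to cover the wall; with the second layer, that gap is filled by the stored background, and only the pixel that falls outside the original image border stays empty. In the approach described in the abstract, the hidden-layer color and depth would come from the GAN-based completion rather than being given.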