VRKitchen: an Interactive 3D Virtual Environment for Task-oriented Learning

PubDate: Mar 2019

Teams: University of California

Writers: Xiaofeng Gao, Ran Gong, Tianmin Shu, Xu Xie, Shu Wang, Song-Chun Zhu

PDF: VRKitchen: an Interactive 3D Virtual Environment for Task-oriented Learning

Abstract

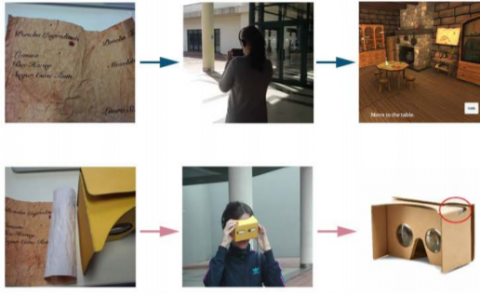

One of the main challenges in advancing task-oriented learning, such as visual task planning and reinforcement learning, is the lack of realistic and standardized environments for training and testing AI agents. Previously, researchers often relied on ad-hoc lab environments. There have been recent advances in virtual systems built with 3D physics engines and photo-realistic rendering for indoor and outdoor environments, but the embodied agents in those systems can only conduct simple interactions with the world (e.g., walking around or moving objects). Most existing systems also do not allow human participation in their simulated environments. In this work, we design and implement a virtual reality (VR) system, VRKitchen, with integrated functions that i) enable embodied agents powered by modern AI methods (e.g., planning and reinforcement learning) to perform complex tasks involving a wide range of fine-grained object manipulations in a realistic environment, and ii) allow human teachers to perform demonstrations to train agents (i.e., learning from demonstration). We also provide standardized evaluation benchmarks and data collection tools to facilitate broad use in research on task-oriented learning and beyond.