Interacting with Distant Objects in Augmented Reality

PubDate: August 2018

Teams: University of Colorado Boulder

Writers: Matt Whitlock; Ethan Harnner; Jed R. Brubaker; Shaun Kane; Danielle Albers Szafir

PDF: Interacting with Distant Objects in Augmented Reality

Abstract

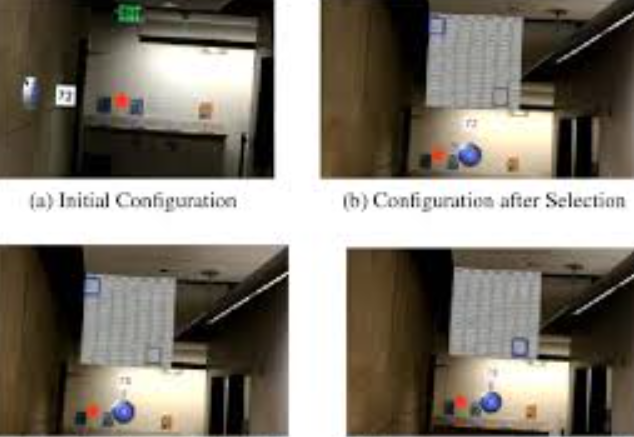

Augmented reality (AR) applications can leverage the full space of an environment to create immersive experiences. However, most empirical studies of interaction in AR focus on interactions with objects close to the user, generally within arm's reach. As objects move farther away, the efficacy and usability of different interaction modalities may change. This work explores AR interactions at a distance, measuring how applications may support fluid, efficient, and intuitive interactive experiences in room-scale augmented reality. We conducted an empirical study (N = 20) to measure trade-offs between three interaction modalities (multimodal voice, embodied freehand gesture, and handheld devices) for selecting, rotating, and translating objects at distances ranging from 8 to 16 feet (2.4 m to 4.9 m). Though participants performed comparably with embodied freehand gestures and handheld remotes, they perceived embodied gestures as significantly more efficient and usable than device-mediated interactions. Our findings offer considerations for designing efficient and intuitive interactions in room-scale AR applications.