SalBiNet360: Saliency Prediction on 360° Images with Local-Global Bifurcated Deep Network

PubDate: May 2020

Affiliation: South China University of Technology

Authors: Dongwen Chen; Chunmei Qing; Xiangmin Xu; Huansheng Zhu

PDF: SalBiNet360: Saliency Prediction on 360° Images with Local-Global Bifurcated Deep Network

Abstract

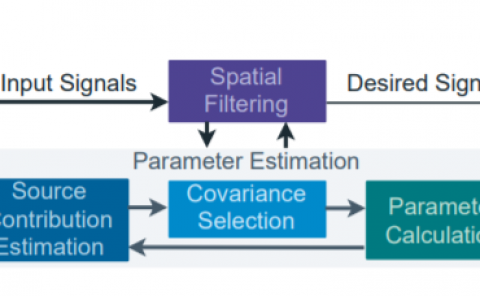

With the development of virtual reality applications, predicting human visual attention on 360° images is valuable to content creators and encoding algorithms, and has become essential for understanding user behaviour. In this paper, we propose a local-global bifurcated deep network for saliency prediction on 360° images, named SalBiNet360. In the global deep sub-network, multiple multi-scale contextual modules and a multilevel decoder are used to integrate features from the middle and deep layers of the network. In the local deep sub-network, only one multi-scale contextual module and a single-level decoder are used, which reduces the redundancy of the local saliency maps. Finally, fused saliency maps are generated by a linear combination of the global and local saliency maps. Experiments on two publicly available datasets show that SalBiNet360 outperforms the state-of-the-art methods tested.
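The final step in the abstract fuses the global and local outputs by a linear combination. The sketch below shows one way to compute such a fusion. It rests on assumptions the abstract does not state: both maps are already resampled to the same equirectangular resolution, each map is min-max normalized before fusion, and the weights are a single hypothetical `alpha` and `1 - alpha`. The names `normalize` and `fuse_saliency` are illustrative only and are not taken from the authors' code.

```python
from typing import List

# A saliency map as a 2D grid of floats (rows x columns), e.g. an equirectangular map.
SaliencyMap = List[List[float]]


def normalize(sal: SaliencyMap) -> SaliencyMap:
    """Min-max normalize a map to [0, 1] (an assumed preprocessing step)."""
    flat = [v for row in sal for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A constant map carries no saliency contrast; map it to all zeros.
        return [[0.0 for _ in row] for row in sal]
    return [[(v - lo) / (hi - lo) for v in row] for row in sal]


def fuse_saliency(global_map: SaliencyMap, local_map: SaliencyMap,
                  alpha: float = 0.5) -> SaliencyMap:
    """Fuse two same-shaped maps as alpha * G + (1 - alpha) * L.

    `alpha` is a hypothetical weight; the weights used by SalBiNet360
    are not given in the abstract.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if len(global_map) != len(local_map) or any(
        len(g) != len(l) for g, l in zip(global_map, local_map)
    ):
        raise ValueError("global and local maps must have the same shape")
    g = normalize(global_map)
    l = normalize(local_map)
    # Pixel-wise convex combination of the two normalized maps.
    return [
        [alpha * gv + (1.0 - alpha) * lv for gv, lv in zip(g_row, l_row)]
        for g_row, l_row in zip(g, l)
    ]
```

For example, `fuse_saliency([[0.0, 2.0], [4.0, 8.0]], [[1.0, 0.0], [0.0, 1.0]], alpha=0.5)` returns `[[0.5, 0.125], [0.25, 1.0]]`. Because the two weights sum to 1 and both inputs are normalized, the fused map also stays within [0, 1].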