Audio-Visual-Olfactory Resource Allocation for Tri-modal Virtual Environments

PubDate: February 2019

Affiliations: University of Warwick; University of the West of England; Birmingham City University

Authors: E. Doukakis; K. Debattista; T. Bashford-Rogers; A. Dhokia; A. Asadipour; A. Chalmers; C. Harvey


Abstract

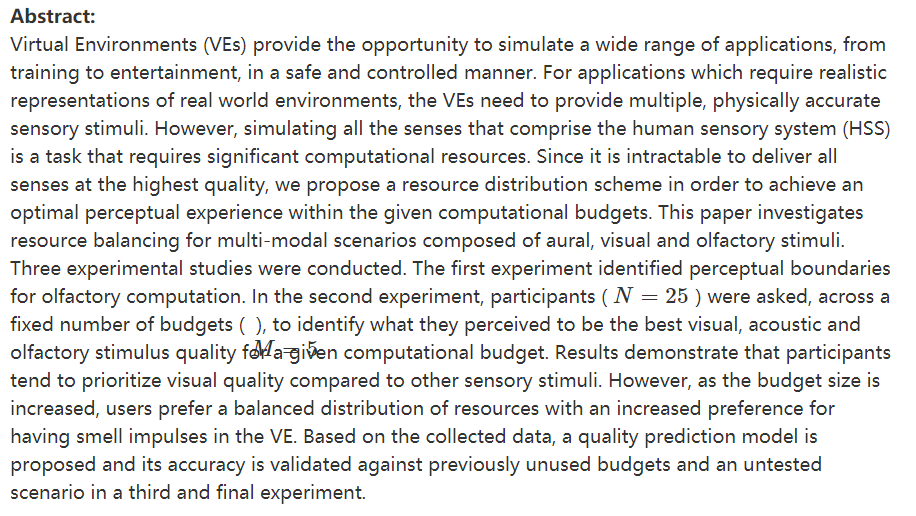

Virtual Environments (VEs) provide the opportunity to simulate a wide range of applications, from training to entertainment, in a safe and controlled manner. For applications that require realistic representations of real-world environments, VEs need to provide multiple, physically accurate sensory stimuli. However, simulating all the senses that make up the human sensory system (HSS) requires significant computational resources. Since it is intractable to deliver all senses at the highest quality, we propose a resource distribution scheme that aims to achieve an optimal perceptual experience within a given computational budget. This paper investigates resource balancing for multi-modal scenarios composed of aural, visual and olfactory stimuli. Three experimental studies were conducted. The first experiment identified perceptual boundaries for olfactory computation. In the second experiment, participants (N = 25) were asked, for each of a fixed number of budgets (M = 5), to identify what they perceived to be the best combination of visual, acoustic and olfactory stimulus quality for that computational budget. Results demonstrate that participants tend to prioritize visual quality over the other sensory stimuli. However, as the budget increases, participants prefer a more balanced distribution of resources, with an increasing preference for including smell impulses in the VE. Based on the collected data, a quality prediction model is proposed, and its accuracy is validated in a third and final experiment against previously unused budgets and an untested scenario.
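The budget-constrained choice in the second experiment can be pictured as a search over quality-level combinations. The minimal Python sketch below assumes hypothetical per-level costs in arbitrary compute units; the `COSTS` table, the convention that olfactory level 0 means "no smell stimulus", and the `feasible_allocations` function are illustrative assumptions, not taken from the paper. It simply enumerates every visual/acoustic/olfactory level triple whose combined cost fits a budget, so a participant's preferred allocation would be one element of the returned set. It does not reproduce the authors' quality prediction model.

```python
from itertools import product

# Hypothetical per-level costs (arbitrary compute units); NOT values from the paper.
COSTS = {
    "visual": [1, 2, 4, 8],
    "audio": [1, 2, 3, 5],
    "olfactory": [0, 2, 4],  # assumption: level 0 = no smell stimulus delivered
}

MODALITIES = ("visual", "audio", "olfactory")


def feasible_allocations(budget, costs=COSTS):
    """Return (levels, total_cost) for every level triple whose total cost <= budget.

    levels is a (visual, audio, olfactory) tuple of indices into each cost list.
    """
    result = []
    # Iterate over all combinations of quality levels, one index per modality.
    for levels in product(*(range(len(costs[m])) for m in MODALITIES)):
        total = sum(costs[m][lvl] for m, lvl in zip(MODALITIES, levels))
        if total <= budget:
            result.append((levels, total))
    return result


if __name__ == "__main__":
    for budget in (2, 3, 6):
        options = feasible_allocations(budget)
        print(f"budget={budget}: {len(options)} feasible allocations")
```

Enumeration is used here only because three modalities with a handful of levels each give a tiny search space; with larger level sets a knapsack-style dynamic program would be the more scalable choice.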