Natural Gestures to Interact with 3D Virtual Objects using Deep Learning Framework

PubDate: December 2019

Teams: IIIT Bhubaneswar

Writers: Suraj Tripathy; Rohan Sahoo; Ajaya Kumar Dash; Debi Prosad Dogra

PDF: Natural Gestures to Interact with 3D Virtual Objects using Deep Learning Framework

Abstract

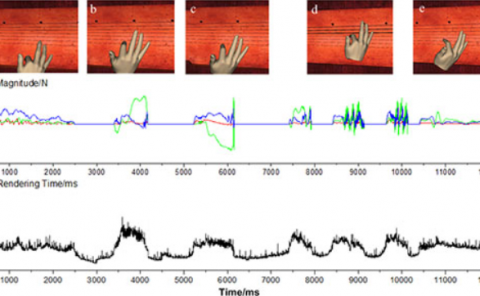

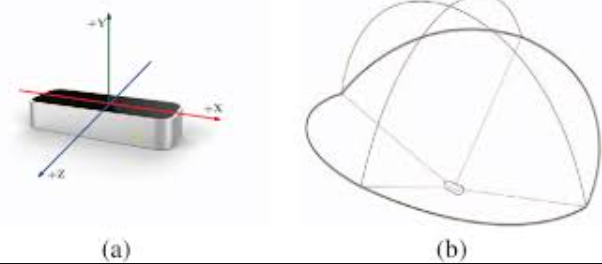

This paper presents a system for freehand interaction with 3D objects using gestures. A secondary contribution of this work is a system that recognizes a set of gestures suitable for interactively manipulating 3D objects, using a deep learning framework applied to the raw 3D images captured via the Leap Motion interface. For this study, we used our own dataset, a collection of images acquired with the Leap Motion controller that comprises six naturally occurring gestures. A deep learning framework built with a CNN was trained on this dataset, and the validation accuracy was found to be as high as 99%. This model is then used to predict the user’s gesture so that they can interact with the 3D objects with bare hands. The application was tried and assessed by ten subjects. The subjects had no prior experience interacting with the Leap Motion sensor, which makes this an interesting study of its potential for use by the general public.
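
As a rough illustration of the prediction step described in the abstract, the sketch below turns six raw classifier scores into a gesture decision. It is not the authors' implementation: the abstract does not name the six gestures, so the labels are placeholders, and the `min_confidence` threshold and the function names are assumptions introduced here for illustration only.

```python
import math
from typing import List, Optional

NUM_GESTURES = 6  # the abstract states six gesture classes
# Placeholder labels (assumption): the abstract does not name the gestures.
GESTURE_LABELS = [f"gesture_{i}" for i in range(NUM_GESTURES)]


def softmax(logits: List[float]) -> List[float]:
    """Convert raw classifier scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_gesture(logits: List[float],
                    min_confidence: float = 0.8) -> Optional[str]:
    """Return the most likely gesture label, or None if the model is unsure.

    The confidence threshold is a hypothetical safeguard, not something
    the abstract describes.
    """
    if len(logits) != NUM_GESTURES:
        raise ValueError(f"expected {NUM_GESTURES} scores, got {len(logits)}")
    probs = softmax(logits)
    best = max(range(NUM_GESTURES), key=probs.__getitem__)
    return GESTURE_LABELS[best] if probs[best] >= min_confidence else None
```

In an interactive application, a `None` result could simply leave the 3D object unchanged for that frame, which avoids acting on ambiguous hand poses.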