EMG-Based Decoding of Manipulation Motions in Virtual Reality: Towards Immersive Interfaces

Publication date: December 2020

Affiliation: The University of Auckland

Authors: Anany Dwivedi; Yongje Kwon; Minas Liarokapis

Abstract

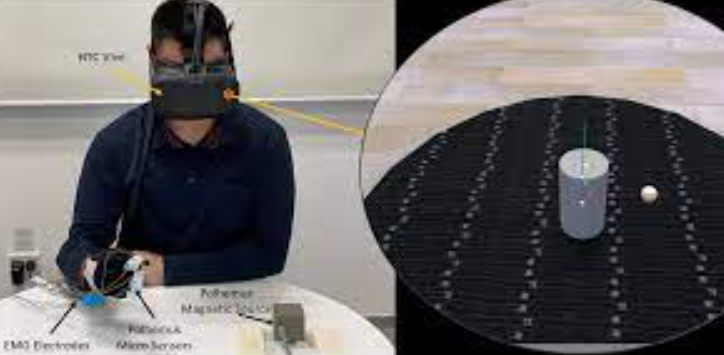

To facilitate the development of a new generation of Virtual Reality (VR) systems and their introduction into everyday applications, new intuitive and immersive interfacing methods must be developed. Over the years, Electromyography (EMG)-based interfaces have been used for unobtrusive interaction with computer systems. However, previous EMG studies have not explored the continuous decoding of the effects of human motion (e.g., the behavior of a manipulated object) in simulated and virtual environments. In this work, we present an EMG-based learning framework that enables immersive interaction with VR environments. To this end, EMG activations from the muscles of the forearm and the hand were acquired during the execution of object manipulation tasks in a virtual world, along with the motion of the manipulated object. The virtual world was visualized using an HTC Vive VR headset, while hand motions were tracked with a dataglove equipped with magnetic motion capture sensors. Object motion decoding was formulated as a regression problem and solved using Random Forests. The study shows that object motion can be successfully decoded from the EMG activations, despite the lack of haptic feedback.
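The abstract states only that object motion decoding was posed as a regression problem and solved with Random Forests. The sketch below illustrates that general idea under assumptions of our own, not the authors' pipeline. It uses windowed root-mean-square (RMS) amplitude as the EMG feature, a single scalar object-motion target, a synthetic signal, and demo hyperparameters. `rms_features`, `SimpleForestRegressor`, and every parameter value are hypothetical. For real use, an established implementation such as scikit-learn's `RandomForestRegressor` would be the usual choice.

```python
import math
import random


def rms_features(emg, window, step):
    """Per-channel root-mean-square over sliding windows (an assumed EMG feature)."""
    n_ch = len(emg[0])
    return [
        [math.sqrt(sum(s[c] ** 2 for s in emg[i:i + window]) / window) for c in range(n_ch)]
        for i in range(0, len(emg) - window + 1, step)
    ]


def _mean(ys):
    return sum(ys) / len(ys)


def _sse(ys):
    m = _mean(ys)
    return sum((y - m) ** 2 for y in ys)


def _build_tree(X, y, depth, params, rng):
    """Grow a regression tree; internal nodes are (feature, threshold, left, right)."""
    max_depth, min_leaf, max_features = params
    if depth >= max_depth or len(y) < 2 * min_leaf:
        return _mean(y)  # leaf: predict the mean target of its samples
    n_feat = len(X[0])
    k = n_feat if max_features is None else min(max_features, n_feat)
    best = None
    for f in rng.sample(range(n_feat), k):  # random candidate features for this split
        for thr in sorted({row[f] for row in X}):
            left = [t for row, t in zip(X, y) if row[f] <= thr]
            right = [t for row, t in zip(X, y) if row[f] > thr]
            if len(left) < min_leaf or len(right) < min_leaf:
                continue
            score = _sse(left) + _sse(right)  # total squared error of the two children
            if best is None or score < best[0]:
                best = (score, f, thr)
    if best is None:
        return _mean(y)
    _, f, thr = best
    mask = [row[f] <= thr for row in X]
    def split(seq, side):
        return [v for v, m in zip(seq, mask) if m == side]
    return (f, thr,
            _build_tree(split(X, True), split(y, True), depth + 1, params, rng),
            _build_tree(split(X, False), split(y, False), depth + 1, params, rng))


def _predict_tree(node, x):
    while isinstance(node, tuple):  # descend until a leaf (a float) is reached
        f, thr, left, right = node
        node = left if x[f] <= thr else right
    return node


class SimpleForestRegressor:
    """Hypothetical minimal random forest: bootstrap-bagged regression trees, averaged."""

    def __init__(self, n_trees=25, max_depth=6, min_leaf=2, max_features=None, seed=0):
        self.n_trees = n_trees
        self.params = (max_depth, min_leaf, max_features)
        self.rng = random.Random(seed)
        self.trees = []

    def fit(self, X, y):
        n = len(X)
        self.trees = []
        for _ in range(self.n_trees):
            idx = [self.rng.randrange(n) for _ in range(n)]  # bootstrap resample
            self.trees.append(
                _build_tree([X[i] for i in idx], [y[i] for i in idx], 0, self.params, self.rng))
        return self

    def predict(self, X):
        return [_mean([_predict_tree(t, x) for t in self.trees]) for x in X]


# Synthetic demo only (not data from the study): channel 0's amplitude follows a
# hypothetical object angle, channels 1-3 carry pure noise.
rng = random.Random(1)
emg, angle = [], []
for t in range(2000):
    a = 1.0 + math.sin(2 * math.pi * t / 500)
    emg.append([a * rng.gauss(0, 1)] + [rng.gauss(0, 1) for _ in range(3)])
    angle.append(a)

WINDOW, STEP = 50, 25
X = rms_features(emg, WINDOW, STEP)
y = [_mean(angle[i:i + WINDOW]) for i in range(0, len(emg) - WINDOW + 1, STEP)]

model = SimpleForestRegressor().fit(X[:60], y[:60])
pred = model.predict(X[60:])
mae = _mean([abs(p - t) for p, t in zip(pred, y[60:])])
baseline = _mean([abs(_mean(y[:60]) - t) for t in y[60:]])
print(f"forest MAE {mae:.3f} vs. mean-predictor MAE {baseline:.3f}")
```

By default every split considers all channels, so the trees differ only through their bootstrap samples. Passing `max_features` restricts each split to a random subset of channels, as in Breiman's formulation. Averaging many trees trained on resampled data reduces the variance of any single tree's estimate, which is one reason forests are a common choice for noisy signals such as EMG.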