Interactive Fusion of 360° Images for a Mirrored World

PubDate: August 2019

Affiliation: University of Maryland

Authors: Ruofei Du; David Li; Amitabh Varshney

Abstract

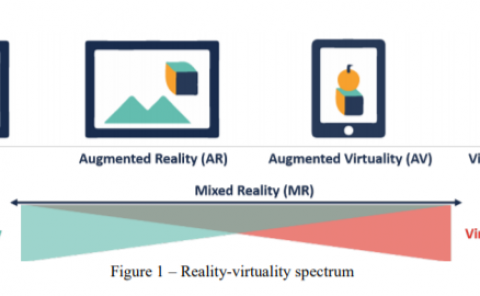

Reconstruction of the physical world in real time has been a grand challenge in computer graphics and 3D vision. In this paper, we introduce an interactive pipeline to reconstruct a mirrored world at two levels of detail. Given a pair of latitude and longitude coordinates, our pipeline streams and caches depth maps, street view panoramas, and building polygons from Google Maps and OpenStreetMap APIs. At a fine level of detail for close-up views, we render textured meshes using adjacent local street views and depth maps. When viewed from afar, we apply projection mappings to 3D geometries extruded from building polygons for a coarse level of detail. In contrast to teleportation, our system allows users to virtually walk through the mirrored world at the street level. We present an application of our approach by incorporating it into a mixed-reality social platform, Geollery, and validate our real-time strategies on various platforms including mobile phones, workstations, and head-mounted displays.
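The two-level strategy described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the 50 m switching radius, the names `choose_lod` and `GeoCache`, and the cache-key rounding are all assumptions made for illustration, and the loader stands in for whatever request would actually fetch a panorama, depth map, or building polygon.

```python
import math

EARTH_RADIUS_M = 6_371_000.0
# Hypothetical switching distance; the abstract does not state a threshold.
FINE_RADIUS_M = 50.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def choose_lod(viewer, target, fine_radius_m=FINE_RADIUS_M):
    """Return "fine" for nearby geometry (street-view mesh) and "coarse"
    for distant geometry (extruded building polygons)."""
    distance = haversine_m(viewer[0], viewer[1], target[0], target[1])
    return "fine" if distance <= fine_radius_m else "coarse"


class GeoCache:
    """Caches whatever `loader(lat, lon)` returns, keyed by rounded coordinates.

    `loader` is a placeholder for a real data request; it is an assumption
    of this sketch, not an API of Google Maps or OpenStreetMap.
    """

    def __init__(self, loader, precision=5):
        self._loader = loader
        self._precision = precision
        self._store = {}

    def get(self, lat, lon):
        key = (round(lat, self._precision), round(lon, self._precision))
        if key not in self._store:
            # Only call the loader on a cache miss.
            self._store[key] = self._loader(*key)
        return self._store[key]


if __name__ == "__main__":
    viewer = (38.9897, -76.9378)
    nearby = (38.9898, -76.9378)
    distant = (38.9997, -76.9378)
    print(choose_lod(viewer, nearby), choose_lod(viewer, distant))
```

Rounding the coordinates before using them as a key means that repeated requests for effectively the same location reuse the cached result, which is one simple way to avoid refetching data while a user walks along a street.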