Real-Time 3D Face Reconstruction and Gaze Tracking for Virtual Reality

PubDate: August 2018

Teams: University of Chinese Academy of Sciences;Cardiff University

Writers: Shu-Yu Chen; Lin Gao; Yu-Kun Lai; Paul L. Rosin; Shihong Xia

PDF: Real-Time 3D Face Reconstruction and Gaze Tracking for Virtual Reality

Abstract

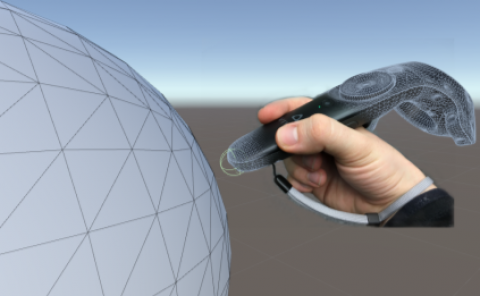

With the rapid development of virtual reality (VR) technology, VR glasses, a.k.a. Head-Mounted Displays (HMDs), are widely available, allowing immersive 3D content to be viewed. A natural need for truly immersive VR is to allow bidirectional communication: the user should be able to interact with the virtual world using facial expressions and eye gaze, in addition to traditional means of interaction. Typical application scenarios include VR virtual conferencing and virtual roaming, where ideally users are able to see other users’ expressions and have eye contact with them in the virtual world. Despite significant achievements in recent years in the reconstruction of 3D faces from RGB or RGB-D images, it remains a challenge to reliably capture and reconstruct 3D facial expressions, including eye gaze, when the user is wearing VR glasses, because the majority of the face is occluded, especially the areas around the eyes, which are essential for recognizing facial expressions and eye gaze. In this paper, we introduce a novel real-time system that is able to capture and reconstruct the 3D faces of users wearing HMDs and robustly recover eye gaze. We demonstrate the effectiveness of our system using live capture; more results are shown in the accompanying video.